Model failures have cost financial institutions billions in unexpected losses, exposing a hard truth: spreadsheet-era frameworks were never built for today's pace of credit, compliance, or portfolio volatility. The institutions that weathered recent economic shocks were not simply lucky. They had replaced intuition-driven underwriting with data-driven models capable of processing signals that no analyst could track manually. This guide is for risk professionals who want a clear, practical understanding of how analytics improves loan underwriting decisions, satisfies regulatory expectations, and keeps portfolio monitoring sharp. It also covers the real failure modes that can undermine even the most sophisticated programs.

Table of Contents

- Why analytics is critical for modern risk management

- Key analytics methodologies powering risk decisions

- Pitfalls and edge cases: Where analytics can go wrong

- Best practices: Embedding analytics into your risk framework

- A fresh perspective: Why risk analytics is about people as much as data

- Accelerate your analytics transformation with RiskInMind

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Analytics is a compliance essential | Modern risk management relies on robust analytics to meet regulatory demands and manage complexity. |
| Key methods drive accuracy | Machine learning, Bayesian models, and big data tools greatly improve risk predictions if integrated thoughtfully. |
| Human oversight prevents failures | Even the best analytics models can fail; human review and explainability are vital safeguards. |
| Embed analytics for resilience | Risk frameworks should weave analytics, governance, and culture together for lasting success. |

Why analytics is critical for modern risk management

Traditional credit and operational risk frameworks were designed for a slower, more predictable environment. Static scorecards, annual model reviews, and manual exception tracking may have served institutions adequately two decades ago, but the pace of regulatory change, combined with the volume and variety of borrower data available today, has shifted what regulators actually require and what examiners look for during reviews.

The FDIC's model risk management guidance makes this explicit. Institutions are now expected to maintain a complete model inventory, conduct independent validation, and perform ongoing performance monitoring. Analytics is not optional in this context. It is the operational backbone that makes those requirements achievable at scale.

Several forces have made the analytics mandate more acute:

- Speed of lending decisions: Borrowers and commercial clients expect near-real-time responses, which manual review cycles simply cannot deliver without sacrificing credit quality.

- Pandemic-era volatility: The sudden, nonlinear shifts in delinquency rates and business closures in 2020 exposed brittle scenario assumptions and accelerated pressure for dynamic, frequently recalibrated models.

- Regulatory explainability demands: Examiners increasingly expect institutions to trace every credit decision back to a specific model input, making black-box approaches a compliance liability.

- New risk typologies: Cyber exposure, climate-related credit risk, and operational disruptions from third-party vendors have expanded the risk landscape far beyond traditional credit and market categories.

The data tells a sobering story. 75% of institutions cite data gaps as the primary obstacle to full analytics adoption, even as regulators push harder for quantitative oversight. That gap represents both a vulnerability and an opportunity.

"Analytics-driven model governance is no longer a competitive differentiator. It is the baseline for institutional credibility with regulators and counterparties alike."

For institutions exploring AI-powered risk intelligence, the transition from static models to adaptive, data-rich frameworks is where measurable improvements in underwriting accuracy and regulatory defensibility begin. Our financial insights and news consistently identify this shift as the defining challenge for risk leaders in 2026.

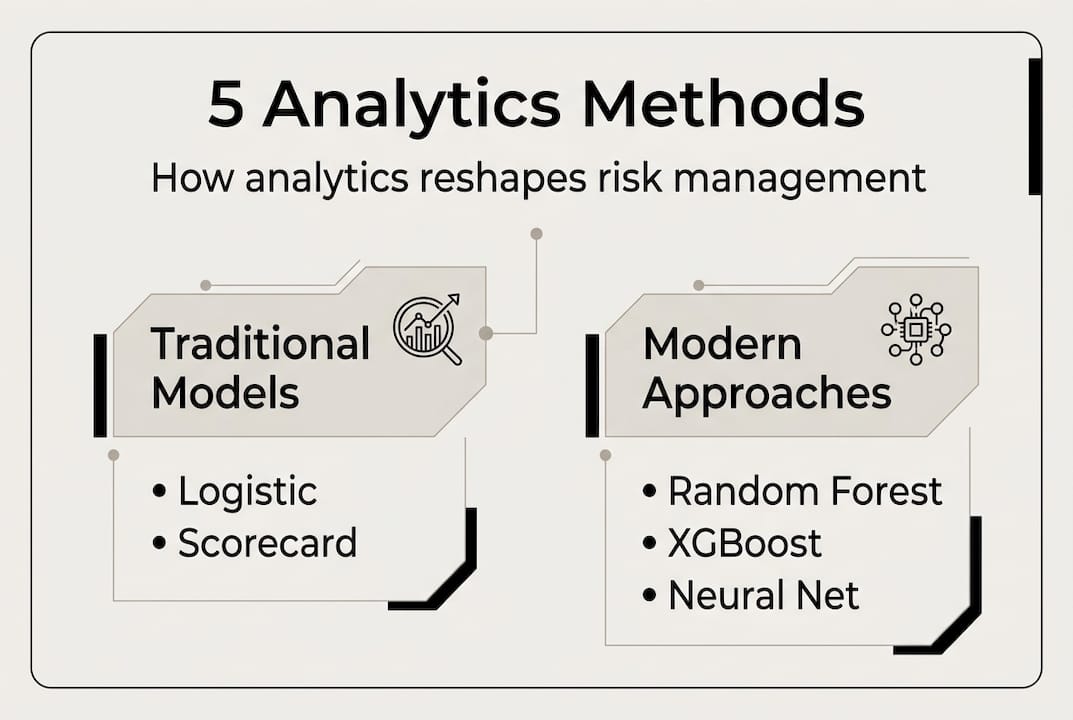

Key analytics methodologies powering risk decisions

With the stakes established, the natural next question is which methodologies actually move the needle. Not every approach fits every institution, and choosing the right combination depends on data availability, model governance maturity, and the specific risk decisions you need to support.

| Method | Primary use case | Key strength | Notable limitation |
|---|---|---|---|
| Random Forest | Credit scoring, PD estimation | Handles nonlinear relationships | Interpretability challenges |
| XGBoost | Loss forecasting, default prediction | High accuracy on tabular data | Requires careful tuning |
| Bayesian Model Averaging | Uncertainty quantification | Robust under data scarcity | Computationally intensive |
| Logistic Regression | Regulatory scorecards | Transparent, auditable | Linear assumptions limit scope |
| Hadoop/Spark pipelines | Big data ingestion, feature engineering | Scales to enterprise data volumes | Infrastructure overhead |

Machine learning techniques such as Random Forest and XGBoost, supported by distributed data platforms such as Hadoop and Spark that make larger and richer feature sets practical, have frequently outperformed regression-only approaches in both probability of default (PD) and loss-given-default (LGD) estimation. The practical implication for credit risk teams is that these tools can detect borrower stress signals earlier and with greater precision than static scoring alone.
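
One way to test that accuracy claim on your own portfolio is to compare champion and challenger models on the same out-of-time sample using a discrimination metric. The minimal Python sketch below computes AUC and the Gini coefficient (2 * AUC - 1) commonly reported in credit scoring. The scores and default flags are invented for illustration, and in practice a library routine such as scikit-learn's `roc_auc_score` would replace the pairwise loop.

```python
from typing import Sequence


def auc(scores: Sequence[float], defaults: Sequence[int]) -> float:
    """Share of (defaulter, non-defaulter) pairs in which the defaulter has the
    higher risk score; ties count as half. Quadratic, which is fine for a sketch."""
    bad = [s for s, y in zip(scores, defaults) if y == 1]
    good = [s for s, y in zip(scores, defaults) if y == 0]
    if not bad or not good:
        raise ValueError("sample needs at least one default and one non-default")
    wins = 0.0
    for b in bad:
        for g in good:
            if b > g:
                wins += 1.0
            elif b == g:
                wins += 0.5
    return wins / (len(bad) * len(good))


def gini(scores: Sequence[float], defaults: Sequence[int]) -> float:
    """Gini coefficient (accuracy ratio) as used in credit scoring: 2 * AUC - 1."""
    return 2.0 * auc(scores, defaults) - 1.0


# Hypothetical out-of-time sample: 1 = defaulted within the outcome window.
defaults = [0, 0, 1, 0, 1, 0, 0, 1]
champion = [0.10, 0.30, 0.35, 0.20, 0.60, 0.40, 0.05, 0.25]
challenger = [0.05, 0.20, 0.55, 0.15, 0.70, 0.30, 0.10, 0.25]

for name, scores in [("champion", champion), ("challenger", challenger)]:
    print(f"{name}: AUC={auc(scores, defaults):.3f} Gini={gini(scores, defaults):.3f}")
```

The explicit loop keeps the definition visible: AUC is the share of defaulter/non-defaulter pairs the model ranks correctly. A higher challenger Gini on out-of-time data is evidence for a better model, not proof of one; stability and explainability still need review.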

Building a defensible credit risk model follows a clear sequence:

- Define the decision objective clearly (e.g., 12-month PD, behavioral score, or CECL loss estimate) before selecting any methodology.

- Curate and document training data, including vintage, geographic distribution, and any known data quality issues.

- Train and benchmark candidate models against each other and against the existing champion model using out-of-time samples.

- Conduct independent validation with a team that had no involvement in model development.

- Deploy with monitoring hooks that track population stability index (PSI) and model performance metrics on a defined schedule.
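
The monitoring hook in the last step can be sketched as follows. This is a minimal Python illustration, assuming PSI is computed on decile bins drawn from the development sample and mapped onto the widely quoted 0.10 / 0.25 rule-of-thumb bands; those thresholds are industry conventions rather than regulatory requirements, and the simulated score lists are hypothetical. Running the same calculation on each input variable's bins gives the characteristic stability index (CSI).

```python
import math
from bisect import bisect_right
from typing import List, Sequence


def quantile_edges(reference: Sequence[float], n_bins: int = 10) -> List[float]:
    """Interior bin edges taken at empirical quantiles of the development sample."""
    ordered = sorted(reference)
    edges = {ordered[k * len(ordered) // n_bins] for k in range(1, n_bins)}
    return sorted(edges)  # the set drops duplicate edges caused by tied values


def bin_shares(values: Sequence[float], edges: List[float], eps: float = 1e-6) -> List[float]:
    """Share of observations per bin, floored at eps so empty bins avoid log(0)."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    return [max(c / len(values), eps) for c in counts]


def psi(expected: Sequence[float], actual: Sequence[float], n_bins: int = 10) -> float:
    """PSI = sum over bins of (actual_i - expected_i) * ln(actual_i / expected_i)."""
    edges = quantile_edges(expected, n_bins)
    e = bin_shares(expected, edges)
    a = bin_shares(actual, edges)
    return sum((ai - ei) * math.log(ai / ei) for ai, ei in zip(a, e))


def psi_status(value: float, warn: float = 0.10, alert: float = 0.25) -> str:
    """Map a PSI value onto rule-of-thumb bands (thresholds are assumptions)."""
    if value < warn:
        return "stable"
    if value < alert:
        return "monitor"
    return "alert"


development_scores = [float(v) for v in range(100)]
current_scores = [v + 30.0 for v in development_scores]  # simulated population shift
print(psi_status(psi(development_scores, development_scores)))
print(psi_status(psi(development_scores, current_scores)))
```

Flooring empty bins at a small epsilon keeps PSI finite while still producing a large contribution for bins the current population has abandoned, which is usually the behavior you want from an alert.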

At both the loan and portfolio level, this stepwise discipline is what separates institutions that sustain their analytic gains from those whose models degrade quietly.

Pro Tip: Require SHAP (SHapley Additive exPlanations) or LIME (Local Interpretable Model-agnostic Explanations) output for every production ML model. These explainability frameworks generate the variable-level contribution reports that both internal audit and regulatory examiners increasingly expect during model reviews. Pairing this with your CECL estimation practices creates a fully traceable documentation chain.
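
For a logistic regression scorecard, variable-level contributions have a simple closed form: assuming independent features and the development-sample means as the baseline, the SHAP value of feature i on the log-odds scale is w_i * (x_i - mean_i). The sketch below uses that identity to produce reason codes; the intercept, weights, baseline means, and feature names are all hypothetical. For tree ensembles such as XGBoost, the open-source `shap` package's `TreeExplainer` computes the corresponding values.

```python
from typing import Dict, List

# Hypothetical logistic-regression scorecard on the log-odds-of-default scale.
INTERCEPT = -3.0
WEIGHTS = {"debt_to_income": 3.0, "utilization": 2.0, "months_since_delinquency": -0.02}
# Hypothetical baseline: feature means from the development sample.
BASELINE = {"debt_to_income": 0.30, "utilization": 0.40, "months_since_delinquency": 36.0}


def log_odds(x: Dict[str, float]) -> float:
    return INTERCEPT + sum(w * x[name] for name, w in WEIGHTS.items())


def contributions(x: Dict[str, float]) -> Dict[str, float]:
    """Per-feature contribution w_i * (x_i - mean_i); for a linear model with
    independent features these equal the SHAP values on the log-odds scale."""
    return {name: w * (x[name] - BASELINE[name]) for name, w in WEIGHTS.items()}


def reason_codes(x: Dict[str, float], k: int = 2) -> List[str]:
    """The k features pushing this applicant's default risk up the most."""
    adverse = [(name, c) for name, c in contributions(x).items() if c > 0]
    adverse.sort(key=lambda item: item[1], reverse=True)
    return [name for name, _ in adverse[:k]]


applicant = {"debt_to_income": 0.45, "utilization": 0.90, "months_since_delinquency": 6.0}
contribs = contributions(applicant)
# Additivity: contributions sum to the applicant's log-odds minus the baseline log-odds.
assert abs(sum(contribs.values()) - (log_odds(applicant) - log_odds(BASELINE))) < 1e-9
print(reason_codes(applicant))
```

The additivity check is what makes this kind of output auditable: the contributions reconcile exactly to the score difference they claim to explain.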

Pitfalls and edge cases: Where analytics can go wrong

No risk tool is perfect. Understanding where analytics can fail is just as important as knowing its capabilities, and overconfidence in a well-performing model is itself a risk factor that examiners have flagged repeatedly.

Concept drift, hidden bias, and poor data quality are the most common culprits behind model misestimation, and they share a notable characteristic: they accumulate silently before producing visible losses or regulatory findings.

| Failure mode | Common cause | Detection method | Prevention strategy |
|---|---|---|---|
| Concept drift | Macroeconomic regime change | Population Stability Index (PSI) plus ongoing performance tracking | Scheduled recalibration triggers |
| Training data bias | Historical underrepresentation | Disparate impact analysis | Diverse data sourcing and reweighting |
| Feature leakage | Future data included in training | Out-of-time validation | Strict temporal data partitioning |
| Vendor model opacity | Black-box third-party models | Due diligence documentation | Contractual explainability requirements |
| Stale validation | Infrequent model review cycles | Performance metric tracking | Risk-tiered review schedules |
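
Strict temporal partitioning, the prevention strategy for feature leakage in the table, can be illustrated with a short Python sketch. It assumes each loan record carries its origination (decision) date and the date its feature snapshot was taken; the record layout, cutoff date, and sample loans are hypothetical.

```python
from datetime import date
from typing import List, NamedTuple, Tuple


class LoanRecord(NamedTuple):
    loan_id: str
    originated: date      # underwriting decision date
    features_as_of: date  # date the feature snapshot was taken
    defaulted: int        # outcome observed after origination


def temporal_split(records: List[LoanRecord], cutoff: date) -> Tuple[List[LoanRecord], List[LoanRecord]]:
    """Train strictly on loans originated before the cutoff; hold out later vintages."""
    train = [r for r in records if r.originated < cutoff]
    out_of_time = [r for r in records if r.originated >= cutoff]
    return train, out_of_time


def leakage_suspects(records: List[LoanRecord]) -> List[str]:
    """Loans whose features were captured after the decision date, i.e. carrying
    information the model could not have had when the loan was underwritten."""
    return [r.loan_id for r in records if r.features_as_of > r.originated]


loans = [
    LoanRecord("A", date(2022, 3, 1), date(2022, 3, 1), 0),
    LoanRecord("B", date(2022, 9, 15), date(2022, 9, 15), 1),
    LoanRecord("C", date(2023, 2, 10), date(2023, 6, 30), 0),  # snapshot taken later
    LoanRecord("D", date(2023, 8, 20), date(2023, 8, 20), 1),
]
train, oot = temporal_split(loans, cutoff=date(2023, 1, 1))
print([r.loan_id for r in train], [r.loan_id for r in oot], leakage_suspects(loans))
```

For a 12-month PD target, training vintages also need their full outcome window observed before the cutoff. This sketch does not check that, so a production pipeline would add an explicit performance-window filter.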

Best practices for sustained model vigilance include:

- Automated drift detection: Set PSI and characteristic stability index (CSI) thresholds that trigger alerts before performance deteriorates materially.

- Bias audits on production data: Run disparate impact checks on credit decisions quarterly, not just at model development time.

- Complete audit trails: Every model decision should be logged with the inputs, version, and timestamp used, supporting both internal review and regulatory inquiry.

- Independent challenge culture: Model owners should expect their assumptions to be questioned regularly by a validation function that has real authority to delay deployment.

- Third-party model scrutiny: Purchased or vendor-supplied models carry the same regulatory expectations as internally developed ones. Automated crypto risk management tools have shown how quickly opacity in third-party systems can create liability.
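
A quarterly bias audit can start from a simple screening statistic: the adverse impact ratio, which divides the approval rate of the group under review by that of a reference group. The sketch below flags ratios under 0.80, the four-fifths rule of thumb that originates in U.S. employment-selection guidelines and is often borrowed as a first screen in fair lending analytics. It is a hypothetical illustration with made-up decisions, not a legal test; a flagged ratio calls for deeper analysis rather than a conclusion.

```python
from typing import Sequence


def approval_rate(decisions: Sequence[int]) -> float:
    """Share of applications approved (1 = approved, 0 = declined)."""
    if not decisions:
        raise ValueError("no decisions for this group")
    return sum(decisions) / len(decisions)


def adverse_impact_ratio(group: Sequence[int], reference: Sequence[int]) -> float:
    """Approval rate of the group under review divided by the reference group's rate."""
    ref_rate = approval_rate(reference)
    if ref_rate == 0:
        raise ValueError("reference group has no approvals")
    return approval_rate(group) / ref_rate


def flag_for_review(ratio: float, threshold: float = 0.80) -> bool:
    """Screening heuristic: ratios below the four-fifths threshold warrant a closer look."""
    return ratio < threshold


# Hypothetical quarter of production decisions.
group_decisions = [1, 1, 0, 1, 0, 1, 0, 1, 0, 1]      # 6 of 10 approved
reference_decisions = [1, 1, 1, 0, 1, 1, 1, 0, 1, 1]  # 8 of 10 approved
ratio = adverse_impact_ratio(group_decisions, reference_decisions)
print(round(ratio, 2), flag_for_review(ratio))
```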

Pro Tip: Embed a human-in-the-loop checkpoint at every high-stakes model output, particularly in commercial credit reviews and large loan renewals. An AI risk memo generator that pairs automated analysis with a structured human review layer reflects the combination regulators have signaled they want to see. For credit unions pursuing growth, speed gains from automation should never come at the cost of accountability.
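
The audit-trail and human-in-the-loop practices can share one mechanism: every decision is logged with its inputs, model version, and UTC timestamp, and the same record carries a flag that routes high-stakes cases to a reviewer. In this minimal Python sketch, the review thresholds (loan size and a borderline PD band) and all field names are hypothetical placeholders for values set by credit policy.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Dict

# Hypothetical policy parameters; real thresholds belong in approved credit policy.
REVIEW_AMOUNT = 1_000_000    # loans at or above this size always get a human reviewer
GRAY_ZONE_PD = (0.04, 0.10)  # PD band where the model output is treated as borderline


@dataclass(frozen=True)
class DecisionRecord:
    application_id: str
    model_version: str
    inputs: Dict[str, float]
    pd_estimate: float
    loan_amount: float
    requires_human_review: bool
    logged_at: str  # UTC timestamp, ISO 8601


def make_record(application_id: str, model_version: str, inputs: Dict[str, float],
                pd_estimate: float, loan_amount: float) -> DecisionRecord:
    low, high = GRAY_ZONE_PD
    needs_review = loan_amount >= REVIEW_AMOUNT or low <= pd_estimate <= high
    return DecisionRecord(
        application_id=application_id,
        model_version=model_version,
        inputs=dict(inputs),  # copy so later mutation cannot alter the logged inputs
        pd_estimate=pd_estimate,
        loan_amount=loan_amount,
        requires_human_review=needs_review,
        logged_at=datetime.now(timezone.utc).isoformat(),
    )


def to_log_line(record: DecisionRecord) -> str:
    """Serialize one decision as a JSON line for an append-only audit log."""
    return json.dumps(asdict(record), sort_keys=True)


record = make_record("APP-1001", "pd-model-v3.2", {"debt_to_income": 0.31, "utilization": 0.55},
                     pd_estimate=0.06, loan_amount=250_000)
print(record.requires_human_review)
print(to_log_line(record))
```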

Best practices: Embedding analytics into your risk framework

With those failure modes in view, the question becomes how to build an analytics program that performs under examiner scrutiny and delivers consistent business value over time. The answer is governance, which is less glamorous than the modeling itself but far more consequential.

SR 11-7, the interagency model risk management guidance issued by the Federal Reserve and the OCC and adopted by the FDIC in 2017, outlines the foundational steps: maintain a complete and current model inventory, conduct independent validation for all models that influence material decisions, and monitor performance on an ongoing basis with documented outcomes. These are not aspirational standards. They are the baseline for passing a model risk management examination.

A practical governance checklist for risk leaders includes:

- Model inventory maintenance: Every model, including vendor tools, should be cataloged with owner, purpose, risk tier, last validation date, and next scheduled review.

- Data lineage documentation: Trace every model input back to its source system, transformation logic, and any known quality limitations.

- Version control for models and data: Production deployments should be version-stamped, with rollback capability and change logs accessible to validation and audit teams.

- Vendor model oversight: Apply the same governance standards to third-party models that you apply to internally built tools, including independent validation and documented performance benchmarks.

- Cross-functional training: Risk, compliance, finance, and business line teams all touch model outputs. Building shared literacy around model limitations reduces the risk of overreliance on numbers that may be misread outside a risk context.
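
A model inventory entry and a risk-tiered review schedule can be represented very simply. The Python sketch below assumes hypothetical revalidation intervals of 12, 24, and 36 months for tiers 1 through 3 and lists models whose next review date has arrived; actual intervals should come from your model risk policy, and the model IDs and owners are invented.

```python
import calendar
from dataclasses import dataclass
from datetime import date
from typing import List

# Hypothetical revalidation intervals by risk tier (1 = highest risk).
REVIEW_INTERVAL_MONTHS = {1: 12, 2: 24, 3: 36}


def add_months(d: date, months: int) -> date:
    """Shift a date by whole months, clamping to the last day of shorter months."""
    years, month_index = divmod(d.month - 1 + months, 12)
    year, month = d.year + years, month_index + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


@dataclass
class InventoryEntry:
    model_id: str
    owner: str
    purpose: str
    risk_tier: int
    is_vendor_model: bool  # vendor models follow the same schedule as internal ones
    last_validated: date

    @property
    def next_review(self) -> date:
        return add_months(self.last_validated, REVIEW_INTERVAL_MONTHS[self.risk_tier])


def due_for_review(inventory: List[InventoryEntry], as_of: date) -> List[str]:
    """IDs of models whose scheduled revalidation date has arrived or passed."""
    return [m.model_id for m in inventory if m.next_review <= as_of]


inventory = [
    InventoryEntry("PD-CONSUMER-01", "Credit Risk", "12-month PD", 1, False, date(2024, 1, 15)),
    InventoryEntry("CECL-VENDOR-02", "Finance", "CECL loss estimate", 2, True, date(2024, 3, 1)),
    InventoryEntry("OPS-TRIAGE-03", "Operations", "Exception triage", 3, False, date(2023, 6, 30)),
]
print(due_for_review(inventory, as_of=date(2025, 6, 30)))
```

Keeping vendor models in the same inventory, under the same schedule logic, is a direct way to apply the vendor oversight standard in the checklist.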

"Documentation is not a bureaucratic burden. It is the institutional memory that protects you when a model underperforms and examiners ask why."

Consistent documentation also speeds onboarding when personnel change, and staff turnover is one of the most underappreciated operational risks in model programs. Setting up portfolio manager alerts as part of your monitoring infrastructure gives teams an early warning layer that complements the governance structure. Strong governance, combined with tools designed to prevent loan defaults through continuous signal monitoring, is where analytics programs reach full maturity.

A fresh perspective: Why risk analytics is about people as much as data

Here is an observation that rarely appears in technical papers or vendor presentations: most analytics programs that underperform do so because of organizational dynamics, not model quality. The data science team builds something genuinely sophisticated. The risk committee does not trust it. The business line finds workarounds. The compliance team never fully understood the output. The model degrades quietly while everyone assumes someone else is watching.

Cross-team collaboration is often treated as a soft concern next to model selection, but our experience consistently shows the opposite. Institutions with mature analytics programs invest heavily in communication infrastructure: shared model documentation that non-quants can actually read, regular model review forums that include business stakeholders, and feedback loops that surface when a model's output is being ignored in practice.

AI can surface risk signals with remarkable precision. It cannot set organizational priorities, adjudicate competing incentives, or build the trust that makes people act on an alert rather than dismiss it. Risk maturity means cultivating both the technical capability and the human judgment to use it well. The most resilient institutions we observe are not simply those with the best models. They are those where risk culture and analytical rigor reinforce each other at every level.

Accelerate your analytics transformation with RiskInMind

If these frameworks resonate with the challenges your institution is navigating, RiskInMind was built to accelerate exactly this kind of transformation. Our AI-powered platform streamlines loan application analytics, automates model-driven monitoring for portfolio concentration and delinquency signals, and keeps your institution aligned with evolving mandates through a dedicated regulatory compliance agent.

The platform is SOC 2® certified, processes risk decisions in under half a second, and is purpose-built for credit unions, community banks, and lenders that need enterprise-grade capability without enterprise-grade implementation timelines. Explore what AI-powered risk solutions can do for your program, and request a demo to identify which analytics module fits your institution's most pressing needs first.

Frequently asked questions

What analytics methods are best for credit risk assessment?

Random Forest and XGBoost often deliver the strongest accuracy in credit risk scoring and loss forecasting, particularly when big data processing tools like Hadoop and Spark make richer feature sets practical. Pairing these with logistic regression for regulatory scorecards balances predictive power with examiner-ready explainability.

How does analytics help meet regulatory compliance?

Analytics enables the model inventory, validation, and monitoring processes that SR 11-7, as adopted by the FDIC, explicitly requires, making it possible to demonstrate traceable, auditable oversight of every model that influences a material risk decision.

What are the biggest risks in using analytics for risk management?

The most consequential risks are concept drift, bias, and data quality failures, all of which can accumulate undetected without structured monitoring, automated drift detection, and human oversight at critical decision points.

How often should analytics models for risk be reviewed?

Model monitoring and validation should be ongoing, with formal periodic revalidation cycles determined by each model's risk tier. Higher-risk models influencing large credit decisions warrant more frequent review than lower-stakes operational tools.
