Financial institutions face a convergence of pressures that traditional risk models were never designed to handle: accelerating regulatory change, expanding credit portfolios, and market volatility that can shift overnight. The old approach of periodic, manual reviews and static scorecards simply cannot keep pace with the volume and complexity of modern risk data. AI-driven frameworks are no longer a competitive advantage reserved for the largest banks. They are quickly becoming a baseline expectation for credit unions, community banks, and lenders that want to stay ahead of credit events, satisfy regulators, and protect their portfolios. This article outlines the core criteria, best practices, and actionable frameworks your team needs to implement AI effectively across underwriting, compliance, and portfolio monitoring.

Table of Contents

- Core criteria for AI-enabled risk management

- Best practices in AI-driven loan underwriting

- Effective compliance and model risk management frameworks

- Optimizing AI for portfolio monitoring and early warning

- The uncomfortable truth about AI in risk management: Power meets scrutiny

- Take the next step: Secure your AI risk management advantage

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| AI demands oversight | AI models require continuous monitoring, explainability, and alignment with evolving regulations. |
| Hybrid approach wins | Combining AI speed with human judgment delivers best results in underwriting and risk review. |
| Regulatory frameworks evolve | Stay current with new rules like OSFI E-23 and US Treasury AI RMF for robust compliance. |
| Real-time portfolio monitoring | AI enables proactive alerts and actions for portfolio risk, improving early warning capability. |

Core criteria for AI-enabled risk management

Before your institution can benefit from AI in risk management, you need a clear framework for evaluating and governing these systems. Not all AI models are created equal, and the criteria you use to select, deploy, and oversee them will determine whether they add value or introduce new vulnerabilities.

The most important criteria fall into four categories:

- Data quality and governance: AI models are only as reliable as the data they consume. Institutions must establish data lineage, validation protocols, and ongoing data integrity checks before any model goes live.

- Model explainability: Regulators and auditors expect you to explain why a model made a specific decision. Black-box outputs are not acceptable in credit decisions or compliance contexts without supporting documentation.

- Continuous validation: AI models differ from traditional statistical models in opacity, data intensity, and dynamic performance, so they require adaptations such as continuous monitoring for drift and enhanced explainability.

- Compliance fit: Every model must align with the regulatory environment your institution operates in, including third-party and vendor-managed solutions.
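
The continuous-validation criterion above can be made concrete with a drift check on model scores. The sketch below computes the Population Stability Index (PSI), a commonly used drift statistic, between a baseline sample and a recent sample. The function name, the bin edges, the sample scores, and the small floor applied to empty bins are all illustrative assumptions, not a prescribed method.

```python
import math

def population_stability_index(baseline, recent, bin_edges, floor=1e-6):
    """PSI between two score samples over shared bins (hypothetical helper)."""
    def bin_shares(values):
        counts = [0] * (len(bin_edges) - 1)
        for v in values:
            for i in range(len(counts)):
                is_last = i == len(counts) - 1
                # The last bin includes its upper edge; the others do not.
                upper_ok = v <= bin_edges[i + 1] if is_last else v < bin_edges[i + 1]
                if bin_edges[i] <= v and upper_ok:
                    counts[i] += 1
                    break
        total = sum(counts)
        if total == 0:
            raise ValueError("no values fall inside the bin edges")
        # Floor each share so the logarithm is defined for empty bins.
        return [max(c / total, floor) for c in counts]

    base = bin_shares(baseline)
    new = bin_shares(recent)
    return sum((n - b) * math.log(n / b) for b, n in zip(base, new))

# Made-up scores for illustration only.
edges = [0.0, 0.25, 0.5, 0.75, 1.0]
baseline_scores = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9]
recent_scores = [0.6, 0.7, 0.8, 0.9, 0.8, 0.9, 0.95, 0.85]
psi = population_stability_index(baseline_scores, recent_scores, edges)
```

A PSI of zero means both samples fall into the bins in identical proportions, and larger values indicate a larger shift. The value that should trigger a review is a policy choice for your validation team rather than something this sketch settles.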

The black-box effect is one of the most underestimated risks in AI adoption. When a neural network flags a loan application or triggers a portfolio alert, your team needs to reconstruct the reasoning chain clearly enough to satisfy an examiner. This is not just a documentation exercise. It is a governance requirement.

Hybrid human-AI models are the practical answer to this challenge. AI handles the scale and speed of data processing, while experienced risk professionals review exceptions, override edge cases, and maintain accountability for final decisions. Exploring AI risk management approaches across different institutional contexts shows that this balance is consistently where successful programs land.

Third-party AI vendors add another layer of complexity. If your institution relies on an outsourced model for credit scoring or fraud detection, you are still responsible for its performance, bias, and regulatory alignment. Vendor contracts must include model documentation requirements, audit rights, and performance benchmarks.

For institutions also using AI risk strategies in trading or algorithmic decision-making, the same governance principles apply across asset classes.

Pro Tip: Inventory every AI model your institution uses, including those embedded in third-party platforms, and assign an internal owner responsible for ongoing oversight and validation.
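
As a rough illustration of the inventory this tip describes, the sketch below keeps one record per model and reports oversight gaps. The field names, the example models, and the 365-day validation window are assumptions made for the example, not requirements taken from any regulation.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Dict, List, Optional

@dataclass
class ModelRecord:
    name: str
    use_case: str
    vendor: Optional[str]           # None for in-house models
    owner: Optional[str]            # internal owner accountable for oversight
    last_validated: Optional[date]  # None if never validated

def oversight_gaps(inventory: List[ModelRecord], today: date,
                   max_age_days: int = 365) -> Dict[str, List[str]]:
    """Return the oversight issues found for each model, keyed by model name."""
    gaps: Dict[str, List[str]] = {}
    for model in inventory:
        issues = []
        if not model.owner:
            issues.append("no internal owner")
        if model.last_validated is None:
            issues.append("never validated")
        elif today - model.last_validated > timedelta(days=max_age_days):
            issues.append("validation overdue")
        if issues:
            gaps[model.name] = issues
    return gaps

# Hypothetical inventory entries for illustration.
inventory = [
    ModelRecord("credit_score_v3", "underwriting", None, "J. Rivera", date(2025, 1, 15)),
    ModelRecord("fraud_vendor_model", "fraud detection", "ExampleVendor", None, None),
]
gaps = oversight_gaps(inventory, today=date(2026, 3, 1))
```

Vendor-embedded models sit in the same list as in-house ones, which keeps third-party systems from falling outside the review cycle.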

Best practices in AI-driven loan underwriting

Loan underwriting is where AI delivers some of its most measurable benefits, and also where the compliance stakes are highest. Getting this right requires a structured approach that integrates AI capabilities without sacrificing transparency or regulatory defensibility.

Here is a practical framework for AI-driven underwriting:

- Expand your data inputs. Traditional underwriting relies heavily on bureau scores and financial statements. AI models can incorporate alternative data such as cash flow patterns, payment history across utility and rent accounts, and behavioral indicators to produce more accurate credit assessments, particularly for thin-file borrowers.

- Build explainability into the model architecture. Do not treat explainability as an afterthought. Select models or supplementary tools that generate reason codes and decision narratives at the point of output, not just in post-hoc reporting.

- Design a hybrid workflow from the start. Human-AI collaboration in fintech prioritizes AI for scale and speed while keeping humans accountable for judgment, edge cases, and compliance oversight in underwriting and monitoring.

- Automate credit memo generation. AI-driven credit memos that document the model's inputs, outputs, and risk flags create a defensible audit trail and reduce manual documentation burden significantly.

- Establish override protocols. Every AI recommendation should have a defined process for human override, with documentation requirements that capture the rationale and outcome for model retraining purposes.
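
The hybrid workflow and override steps above can be sketched as a small routing function plus an override log. Everything here is hypothetical: the probability cutoffs, the class and function names, and the rule that a recommendation without reason codes always goes to a person are assumptions chosen to illustrate the idea, not a recommended policy.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class Recommendation:
    application_id: str
    default_probability: float
    reason_codes: List[str]

@dataclass
class Override:
    application_id: str
    model_route: str
    final_decision: str
    reviewer: str
    rationale: str

def route(rec: Recommendation, approve_below: float = 0.05,
          decline_above: float = 0.30) -> str:
    """Decide whether the model's view is clear-cut or needs human review."""
    if not rec.reason_codes:
        return "human_review"  # no explanation available, so a person decides
    if rec.default_probability < approve_below:
        return "recommend_approve"
    if rec.default_probability > decline_above:
        return "recommend_decline"
    return "human_review"      # gray band between the two cutoffs

def record_override(log: List[Override], rec: Recommendation,
                    final_decision: str, reviewer: str, rationale: str) -> Override:
    """Append an override entry; an empty rationale is rejected."""
    if not rationale.strip():
        raise ValueError("override requires a documented rationale")
    entry = Override(rec.application_id, route(rec), final_decision, reviewer, rationale)
    log.append(entry)
    return entry
```

Keeping the model's original route next to the reviewer's final decision gives a retraining team the pairs it needs to study where human and model judgment diverge.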

Empirical research supports this direction. A systematic review of 21 studies found that ML models show superior predictive accuracy in credit and fraud risk compared to traditional methods, though real-world scalability and explainability gaps remain active challenges.

"The institutions that get the most from AI underwriting are not the ones that automate the most decisions. They are the ones that design the clearest boundaries between what the model decides and what the human decides."

For institutions ready to operationalize this, AI loan underwriting tools designed specifically for community banks and credit unions can accelerate deployment while maintaining the governance controls examiners expect.

Pro Tip: Use AI-generated credit memos as a standard output for every underwriting decision. They create transparent audit trails and cut the time your team spends on manual documentation.

Effective compliance and model risk management frameworks

Compliance is not a destination. It is a continuous process, and AI makes that process both more manageable and more demanding at the same time. Enterprise model risk management (MRM) frameworks must now account for the full lifecycle of AI and ML models, from initial development through retirement.

Two regulatory frameworks are shaping institutional requirements in 2026:

| Control Area | OSFI E-23 | US Treasury FS AI RMF |
|---|---|---|
| Model inventory | Required for all AI/ML | Required across all functions |
| Governance structure | Board and senior management | Governance and accountability |
| Third-party oversight | Explicit requirement | Vendor risk controls |
| Drift detection | Continuous monitoring | Ongoing monitoring protocols |
| Explainability | Documented rationale | Transparency controls |
| Effective date | May 2027 | Operationalize now |

OSFI Guideline E-23 expands the definition of a model to include AI and ML systems, mandating enterprise-wide MRM frameworks with inventory, risk assessment, lifecycle management, and third-party oversight, effective May 2027. Canadian institutions need to begin gap assessments now, not in 2027.

On the US side, the Treasury's FS AI RMF outlines 230 control objectives across governance, data, models, monitoring, and third-party risk for financial AI, operationalized via a Risk and Control Matrix. The breadth of that framework signals where regulatory expectations are heading, regardless of your institution's geography.

Key control objectives every MRM program should address include:

- Explainability standards: Documented model rationale for every credit and compliance decision

- Drift detection protocols: Scheduled and triggered reviews when model performance deviates from baseline

- Data integrity controls: Validation of inputs at ingestion, not just at model output

- Auditability requirements: Full traceability from data input through model output to final decision
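
Two of the controls above, input validation at ingestion and end-to-end traceability, can be illustrated together. The sketch below is a minimal example under stated assumptions: the field names and types are hypothetical, and hashing the canonical JSON of the input is just one simple way to tie a decision back to the exact data the model saw.

```python
import hashlib
import json

# Hypothetical schema: field name -> expected Python type.
REQUIRED_FIELDS = {"loan_id": str, "balance": float, "days_past_due": int}

def validate_input(row: dict) -> list:
    """Return a list of data-integrity problems found at ingestion."""
    errors = []
    for name, expected_type in REQUIRED_FIELDS.items():
        if name not in row:
            errors.append(f"missing {name}")
        elif not isinstance(row[name], expected_type):
            errors.append(f"{name} should be {expected_type.__name__}")
    if isinstance(row.get("balance"), float) and row["balance"] < 0:
        errors.append("balance is negative")
    return errors

def audit_record(row: dict, model_version: str, model_output: float,
                 decision: str) -> dict:
    """Link the input (by hash), the model output, and the final decision."""
    canonical = json.dumps(row, sort_keys=True)  # key order does not affect the hash
    return {
        "input_sha256": hashlib.sha256(canonical.encode("utf-8")).hexdigest(),
        "model_version": model_version,
        "model_output": model_output,
        "decision": decision,
    }
```

Validating before scoring means a malformed record is rejected with a named reason rather than silently producing a score that later has to be explained to an examiner.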

For institutions managing CECL estimation or other complex model-dependent processes, these controls are not optional. They are the foundation of examiner confidence. Automating these workflows through compliance automation tools reduces manual burden while maintaining the documentation trail regulators require.

Optimizing AI for portfolio monitoring and early warning

Portfolio monitoring is where AI's real-time capabilities create the most immediate operational value. Static quarterly reviews miss the credit signals that appear between reporting cycles, and by the time a traditional model flags a deteriorating credit, the institution may already be managing a loss rather than preventing one.

AI enables continuous risk assessment using alternative data, predictive analytics for early warnings, and drift detection across the portfolio. This shifts your team from reactive loss management to proactive risk intervention.

| Capability | Manual monitoring | AI-driven monitoring |
|---|---|---|
| Review frequency | Quarterly or annual | Continuous, real-time |
| Data sources | Financial statements, bureau | Alternative data, behavioral signals |
| Alert speed | Weeks to months | Hours to days |
| Coverage | Sample-based | Full portfolio |
| Early warning | Limited | Predictive, threshold-based |

The practical benefits of this shift are significant:

- Speed: AI flags deteriorating credits days or weeks before they appear in traditional reporting cycles

- Coverage: Every loan in the portfolio is monitored continuously, not just the ones selected for periodic review

- Early identification: Predictive models catch behavioral and market signals that precede formal delinquency

- Model performance surveillance: Drift detection ensures that the monitoring model itself remains accurate as market conditions evolve
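
A threshold-based early warning rule of the kind described above might look like the sketch below. It assumes each loan carries a history of model-estimated default probabilities. The field names, the two thresholds, and the sample portfolio are hypothetical values chosen for illustration.

```python
def early_warnings(loans, level_threshold=0.20, jump_threshold=0.05):
    """Flag loans whose latest risk estimate is high or has risen sharply."""
    alerts = []
    for loan in loans:
        history = loan["pd_history"]
        if not history:
            continue  # nothing to assess yet
        latest = history[-1]
        reasons = []
        if latest >= level_threshold:
            reasons.append("level")
        if len(history) >= 2 and latest - history[-2] >= jump_threshold:
            reasons.append("jump")
        if reasons:
            alerts.append((loan["loan_id"], reasons))
    return alerts

# Made-up portfolio: every loan is checked, not a sample.
portfolio = [
    {"loan_id": "L-1", "pd_history": [0.03, 0.04, 0.12]},
    {"loan_id": "L-2", "pd_history": [0.22, 0.23]},
    {"loan_id": "L-3", "pd_history": [0.02, 0.02]},
]
alerts = early_warnings(portfolio)
```

The "jump" rule is what lets a loan surface before it reaches a level that traditional reporting would flag: in the sample portfolio, L-1 is still below the level threshold but is reported because its estimate rose sharply between observations.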

For a concrete illustration of what early warning systems can prevent, the analysis of lessons from bank failures in 2026 shows how delayed detection consistently amplifies losses that proactive monitoring could have contained.

Institutions that want to move quickly can start with high risk alert tools that layer onto existing portfolio data without requiring a full platform overhaul.

The uncomfortable truth about AI in risk management: Power meets scrutiny

The mainstream conversation about AI in risk management tends to focus on efficiency gains and accuracy improvements. Both are real. But the conversation that does not happen often enough is about what AI adoption actually costs in terms of governance burden, regulatory scrutiny, and organizational accountability.

Black-box opacity complicates validation. Agentic AI strains the static assumptions behind SR 11-7 and calls for dynamic validation. Vendor concentration risk is also emerging as a systemic concern that many institutions have not fully priced into their risk frameworks.

Many teams also underestimate vendor risk. When a third-party AI provider updates its model, your institution's risk profile changes without a formal change management process. That is a governance gap that examiners are increasingly focused on.

Static checklists are no longer sufficient. Expect regulators to require ongoing, dynamic validation and governance processes that evolve with the model. The institutions that will navigate this environment most successfully are not the ones with the most sophisticated AI. They are the ones with the clearest human oversight structures and the most disciplined documentation practices. Reviewing lessons from real bank failures reinforces this point consistently: governance gaps, not model gaps, are what regulators cite.

Take the next step: Secure your AI risk management advantage

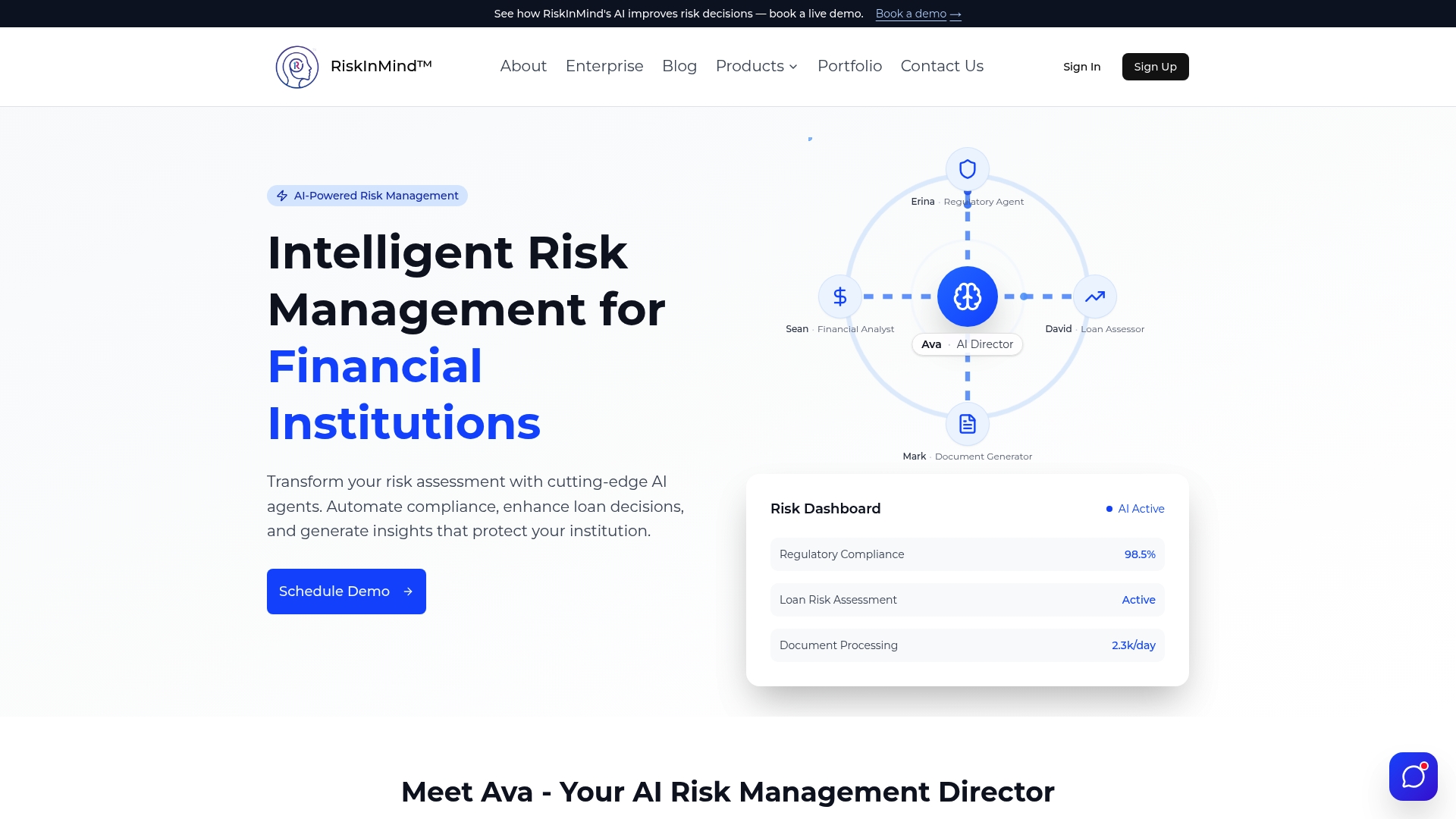

The frameworks and practices outlined here are only as effective as the tools your institution uses to implement them. RiskInMind's AI risk management platform is built specifically for credit unions, community banks, and lenders that need enterprise-grade AI capabilities without the complexity of building from scratch.

From AI compliance automation that maps directly to regulatory control frameworks, to AI loan analysis tools that deliver explainable credit assessments in real time, RiskInMind gives your risk team the infrastructure to act on what you have learned here. SOC 2® certified, bank-grade secure, and processing decisions in under half a second, the platform is designed to meet the governance standards your examiners expect.

Frequently asked questions

What are the biggest challenges in adopting AI for risk management?

AI models differ from traditional models in opacity, data intensity, and dynamic performance, meaning institutions must build continuous monitoring, drift detection, and explainability protocols into their governance frameworks from day one, not as an afterthought.

How do regulations like OSFI E-23 impact financial institutions using AI?

OSFI Guideline E-23 requires enterprise-wide model risk management frameworks for all AI and ML models, including governance, inventory, lifecycle management, and third-party oversight, with an effective date of May 2027 that institutions should be preparing for now.

Are AI underwriting models more accurate than traditional methods?

A systematic review of 21 studies found that ML models show superior predictive accuracy in credit and fraud risk compared to traditional methods, though limited real-world scalability and explainability gaps remain active challenges that institutions must address in their model governance programs.

What steps should we take to monitor AI models effectively?

AI enables continuous risk assessment using real-time data and predictive analytics, so your monitoring program should include scheduled drift detection reviews, real-time performance thresholds, and regular model audits tied to your MRM governance calendar.
