Most risk management teams still open their morning with a batch report that reflects yesterday's data, and that gap between event and awareness is precisely where regulatory and credit risk accumulates undetected. Real-time risk monitoring changes that equation fundamentally, processing and analyzing transactional, behavioral, and market data continuously so that alerts, compliance actions, and portfolio adjustments happen in seconds rather than hours. This guide clarifies what real-time risk monitoring actually is, separates marketing language from technical reality, and maps the technology, challenges, and regulatory implications that matter most to CROs, compliance officers, and risk analysts at credit unions, community banks, and lending institutions operating under tightening supervisory expectations.

Table of Contents

- Defining real-time risk monitoring in finance

- Core technologies and operational workflow

- Key challenges and practical pitfalls

- Regulatory context and compliance integration

- AI-driven early warning and predictive monitoring

- A practitioner's take: the realities and hidden levers of real-time risk monitoring

- How RiskInMind can elevate your real-time risk monitoring

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Continuous insight | Real-time risk monitoring provides up-to-the-second visibility into potential threats and exposures. |
| Automated compliance | Monitoring connects directly to compliance workflows, ensuring controls and evidence are always current. |
| Actionable alerts | Alerts are routed to decision-makers instantly, enabling rapid, decisive responses instead of slow reviews. |
| AI-driven early warning | Predictive analytics spot risks ahead of benchmarks, giving leadership more time to act. |
| Operational challenges | Deploying real-time systems requires careful attention to event handling, false positives, and integration with governance. |

Defining real-time risk monitoring in finance

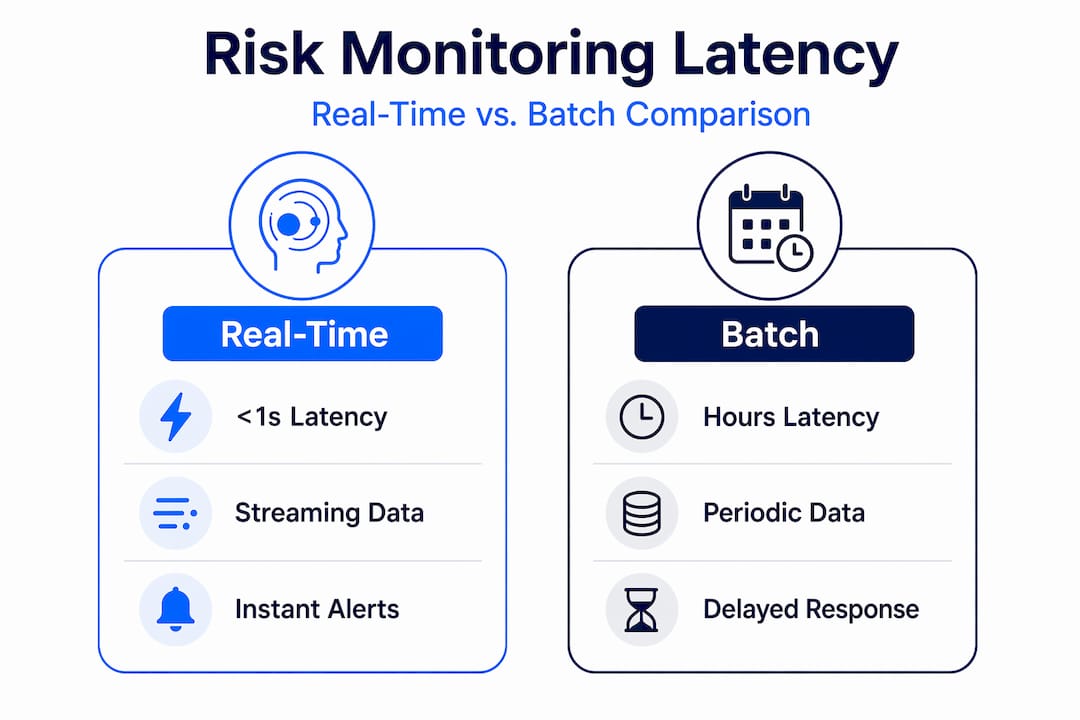

The term "real-time" carries more nuance than most vendor conversations acknowledge. In practice, real-time risk monitoring spans a spectrum: true sub-second streaming at one end, near-real-time micro-batch processing in the middle, and continuous periodic monitoring that regulators sometimes accept as functionally equivalent at the other end. Understanding where your institution sits on that spectrum is the first step toward making sound architectural and governance decisions.

At its core, real-time risk monitoring involves four tightly coupled elements: streaming data ingestion, stateful analytics, automated alerting, and decision-support dashboards. Streaming data ingestion pulls from transaction ledgers, market feeds, borrower activity signals, and third-party data providers without waiting for an end-of-day batch file. Stateful analytics applies rule-based logic and increasingly AI-driven models to compute exposures, flag threshold breaches, and maintain running aggregates such as rolling 30-day delinquency rates or intraday liquidity positions.
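To make the idea of a running aggregate concrete, here is a minimal Python sketch of a rolling 30-day delinquency rate. The `PaymentEvent` shape and the `RollingDelinquencyRate` class are hypothetical names chosen for illustration, and the sketch assumes events arrive in due-date order. A production stream processor would manage this state with its own windowing primitives.

```python
from collections import deque
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class PaymentEvent:
    # Hypothetical event shape; a real ledger event would carry more fields.
    account_id: str
    due_at: datetime  # event time: when the installment was due
    paid: bool        # False means the installment was missed


class RollingDelinquencyRate:
    """Keeps a running missed-installment rate over a trailing window."""

    def __init__(self, window=timedelta(days=30)):
        self.window = window
        self._events = deque()
        self._missed = 0

    def update(self, event):
        # Assumption: events arrive in due_at order.
        self._events.append(event)
        if not event.paid:
            self._missed += 1
        cutoff = event.due_at - self.window
        # Evict events that have aged out of the trailing window.
        while self._events and self._events[0].due_at <= cutoff:
            expired = self._events.popleft()
            if not expired.paid:
                self._missed -= 1
        return self._missed / len(self._events)
```

Each call to `update` returns the current rate without rescanning history, which is the property that distinguishes a stateful aggregate from a batch recomputation.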

As one technical implementation guide notes, streaming data ingestion combined with low-latency processing, aggregation, and dashboard-driven workflows is the standard mechanical architecture for real-time risk monitoring. Technologies like Apache Kafka serve as the event broker, distributing millions of messages per second across consumers, while Apache Flink handles stateful stream processing, computing running risk metrics with millisecond-level latency and correctly handling events that arrive out of order.

A common misconception is that "real-time" automatically means "instantaneous for all risk types." Credit risk assessments involving complex document review, qualitative judgment, or committee approval will never be purely sub-second, even with the fastest pipelines. What real-time architecture delivers is the elimination of unnecessary lag, so that the data feeding those human decisions is current rather than 24 hours stale. Event-time handling, which tags each event with when it occurred rather than when it arrived, matters enormously here because network latency and upstream system delays routinely cause data to arrive out of sequence, and a monitoring system that ignores this produces materially incorrect risk aggregates.
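The effect of event-time handling on an aggregate can be illustrated with a small Python sketch. The data below is invented for illustration: two credit line draws occur on January 31, but the second is delayed upstream and arrives just after midnight. The function name `daily_totals` and the tuple layout are assumptions, not part of any specific platform.

```python
from collections import defaultdict
from datetime import datetime


def daily_totals(events, use_event_time):
    """Sum amounts (in cents) per calendar day, keyed by the chosen timestamp."""
    totals = defaultdict(int)
    for occurred_at, arrived_at, amount_cents in events:
        ts = occurred_at if use_event_time else arrived_at
        totals[ts.date()] += amount_cents
    return dict(totals)


# Hypothetical data: the second draw occurred on Jan 31 but arrived after midnight.
events = [
    (datetime(2024, 1, 31, 16, 0), datetime(2024, 1, 31, 16, 0), 500_000),
    (datetime(2024, 1, 31, 23, 58), datetime(2024, 2, 1, 0, 3), 250_000),
]

by_event_time = daily_totals(events, use_event_time=True)
by_arrival_time = daily_totals(events, use_event_time=False)
```

Keyed by event time, the full 750,000 cents lands on January 31. Keyed by arrival time, the month-end figure is understated by a third and the remainder leaks into February.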

| Monitoring concept | Latency range | Typical use case |
|---|---|---|
| True real-time streaming | Under 1 second | Fraud detection, intraday liquidity |
| Near-real-time micro-batch | 1 to 60 seconds | Credit line utilization, AML scoring |
| Continuous periodic | Minutes to hours | Regulatory capital ratios, stress testing |
| Batch reporting | Hours to days | End-of-day portfolio summaries |

Core technologies and operational workflow

Understanding the technology is not just an IT concern. Decision-makers who grasp the architecture make better vendor selection, budget, and governance choices. The modern real-time risk monitoring stack follows a clear operational sequence, and each stage introduces both capability and potential failure points.

The step-by-step risk analytics workflow for a real-time environment generally proceeds as follows:

- Data capture: Transaction events, behavioral signals, and external market data are published to an event broker such as Kafka the moment they occur, creating a durable, replayable log of all activity.

- Aggregation and analysis: A stateful stream processor such as Flink consumes events, maintains running risk windows (30-day exposure totals, rolling volatility bands), and applies scoring models that recalibrate continuously as new data arrives.

- Alerting and triage: When a computed metric crosses a defined threshold, an automated alert is generated and routed to the appropriate team or workflow, with materiality scoring applied to filter signal from noise.

- Compliance workflow closure: Alerts that meet defined severity levels trigger documented compliance actions, evidence collection, and SLA countdown timers, ensuring that nothing falls through the administrative cracks between detection and resolution.
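The four stages above can be sketched in a few lines of plain Python. This is an in-process illustration only: a simple method call stands in for the event broker, and `ExposureMonitor`, `Alert`, the exposure limit, and the SLA value are all hypothetical. It is not how Kafka or Flink are programmed, just the shape of the logic they would host.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta


@dataclass
class Alert:
    account_id: str
    metric: str
    value: float
    raised_at: datetime
    sla_due: datetime
    evidence: list = field(default_factory=list)


class ExposureMonitor:
    """Aggregates per-account exposure, alerts on a breach, opens a compliance record."""

    def __init__(self, limit, sla):
        self.limit = limit
        self.sla = sla
        self.exposure = {}
        self.open_alerts = []

    def on_event(self, account_id, amount, occurred_at):
        # Aggregation: maintain running state per account.
        previous = self.exposure.get(account_id, 0.0)
        total = previous + amount
        self.exposure[account_id] = total
        # Alerting: fire only when the limit is crossed, not on every later event.
        if total > self.limit and previous <= self.limit:
            alert = Alert(account_id, "exposure", total, occurred_at,
                          occurred_at + self.sla)
            # Compliance closure: attach evidence and start the SLA clock.
            alert.evidence.append(
                f"exposure {total:.2f} exceeded limit {self.limit:.2f}")
            self.open_alerts.append(alert)
```

Firing on the crossing rather than on every event above the limit is a deliberate design choice here, since it keeps one breach from producing a stream of repeat alerts.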

A streaming-first architecture using continuous ingestion and real-time reporting, with Kafka as the event broker and Flink for stateful stream processing, routinely achieves millisecond-level processing for high-volume financial data. The practical difference for risk teams is that portfolio risk alerts can reach a relationship manager's mobile dashboard within seconds of a borrower's payment failing to post, not at 8 a.m. the following morning.

| Dimension | Batch system | Real-time streaming |
|---|---|---|
| Latency | Hours to days | Milliseconds to seconds |
| Alert accuracy | Based on stale data | Based on current state |
| Intervention cycle | Reactive, next-day | Proactive, continuous |
| Regulatory auditability | Periodic snapshots | Continuous audit trail |
| Infrastructure complexity | Lower | Higher, requires event-time handling |

Pro Tip: When evaluating streaming platforms, prioritize event-time semantics and event-order guarantees over raw throughput claims. A system that processes a million events per second but handles late arrivals incorrectly will produce risk aggregates that overstate or understate exposures in ways that are difficult to audit after the fact.

Key challenges and practical pitfalls

While the technology promises speed and clarity, deploying real-time risk monitoring comes with a distinct set of challenges that institutions must plan for deliberately rather than discover operationally.

Alert fatigue is the first and most corrosive problem. When a system generates thousands of low-materiality alerts per day, risk analysts quickly develop learned helplessness, scanning notification feeds without genuine scrutiny. Controlling false positives and noise requires purposeful deduplication logic, correlation engines that cluster related events into a single incident, and materiality scoring frameworks that distinguish between a borrower missing one installment and a borrower showing a coordinated pattern of delinquency across multiple products.
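A minimal Python sketch of deduplication and correlation might look like the following. The `RawAlert` and `Incident` types, the 7-day correlation window, and the materiality score (a count of distinct products affected) are illustrative assumptions. A real scoring framework would weigh amounts, borrower segment, and history.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta


@dataclass(frozen=True)
class RawAlert:
    borrower_id: str
    product: str
    rule: str
    at: datetime


@dataclass
class Incident:
    borrower_id: str
    first_seen: datetime
    last_seen: datetime
    products: set = field(default_factory=set)
    alert_count: int = 0

    @property
    def materiality(self):
        # Hypothetical score: breadth across products, not repeat volume.
        return len(self.products)


def correlate(alerts, window=timedelta(days=7)):
    """Drop exact duplicates, then merge each borrower's nearby alerts into one incident."""
    incidents = []
    open_by_borrower = {}
    seen = set()
    for alert in sorted(alerts, key=lambda a: a.at):
        if alert in seen:  # deduplication: identical alert emitted again
            continue
        seen.add(alert)
        incident = open_by_borrower.get(alert.borrower_id)
        if incident is None or alert.at - incident.last_seen > window:
            incident = Incident(alert.borrower_id, alert.at, alert.at)
            incidents.append(incident)
            open_by_borrower[alert.borrower_id] = incident
        incident.last_seen = alert.at
        incident.products.add(alert.product)
        incident.alert_count += 1
    return incidents
```

Under this sketch, a single missed installment reported twice yields one incident with materiality 1, while missed payments on three different products within a week collapse into one incident with materiality 3.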

The primary challenges institutions face in production deployments include:

- Data coverage gaps: Not every upstream system is capable of publishing events in real-time. Legacy core banking platforms, for instance, often produce batch files at set intervals, creating blind spots in otherwise streaming pipelines.

- Network latency and upstream delays: Events from external data providers arrive with variable lag, meaning a pipeline without proper late-event handling will misattribute the timing of risk signals and produce incorrect compliance timestamps.

- Materiality scoring calibration: Thresholds set too tightly generate noise; thresholds set too loosely miss material events. This calibration requires ongoing tuning against historical incident data and is not a one-time configuration task.

- Out-of-order event handling: Distributed systems do not guarantee delivery order. A payment reversal event arriving before the original payment event can temporarily make a healthy account appear delinquent if the processing logic lacks proper watermarking.

- AI and ML model drift: Machine learning models that power anomaly detection or credit risk scoring degrade as the underlying data distribution shifts, particularly during economic stress periods when the patterns the model learned no longer hold.
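The out-of-order problem described above is commonly addressed with a watermark: events are held briefly and released in event-time order once the stream has advanced far enough. The Python sketch below is a simplified illustration of that idea, not the API of any stream processor. `WatermarkBuffer` and the five-second allowed lateness are hypothetical.

```python
import heapq
from datetime import datetime, timedelta


class WatermarkBuffer:
    """Holds events until the watermark passes them, then releases them in order."""

    def __init__(self, allowed_lateness):
        self.allowed_lateness = allowed_lateness
        self._heap = []
        self._max_seen = None
        self._seq = 0  # tie-breaker so payloads are never compared

    def push(self, event_time, payload):
        """Add one event; return the events now safe to process, in event-time order."""
        heapq.heappush(self._heap, (event_time, self._seq, payload))
        self._seq += 1
        if self._max_seen is None or event_time > self._max_seen:
            self._max_seen = event_time
        # Watermark: latest event time seen minus the lateness we tolerate.
        watermark = self._max_seen - self.allowed_lateness
        ready = []
        while self._heap and self._heap[0][0] <= watermark:
            released_time, _, released_payload = heapq.heappop(self._heap)
            ready.append((released_time, released_payload))
        return ready
```

If a reversal stamped two seconds after its payment arrives first, both are held and then released as payment followed by reversal. An event that arrives later than the allowed lateness would still be released out of order in this sketch, so a production design needs a separate path for such late data.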

"The moment a risk system stops surfacing actionable signals and starts surfacing volume, it stops functioning as a risk tool and starts functioning as a noise generator. Deduplication and materiality are not optional features."

These challenges are not reasons to avoid real-time monitoring; they are reasons to architect it deliberately. Resources on automated risk management can help institutions design deduplication logic and materiality frameworks before they encounter alert fatigue in production.

Pro Tip: Build continuous model validation directly into your streaming workflow rather than treating it as a quarterly review task. When a model's false positive rate spikes or its recall on material events drops, you want that signal visible in your risk dashboard, not discovered during an exam.
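One way to surface those signals continuously is to track alert quality over a rolling window of reviewed outcomes. The Python sketch below assumes each alert decision is eventually labeled as material or not. `RollingAlertQuality` and its default thresholds are placeholders, not recommended values.

```python
from collections import deque


class RollingAlertQuality:
    """Tracks false positive rate and recall over the most recent labeled outcomes."""

    def __init__(self, size=500, max_fp_rate=0.10, min_recall=0.80):
        # Placeholder thresholds; calibrate against your own incident history.
        self._outcomes = deque(maxlen=size)  # (alerted, was_material) pairs
        self.max_fp_rate = max_fp_rate
        self.min_recall = min_recall

    def record(self, alerted, was_material):
        self._outcomes.append((alerted, was_material))

    def false_positive_rate(self):
        # FP / (FP + TN): share of non-material cases that still triggered an alert.
        negatives = [alerted for alerted, material in self._outcomes if not material]
        return sum(negatives) / len(negatives) if negatives else None

    def recall(self):
        # TP / (TP + FN): share of material cases that triggered an alert.
        positives = [alerted for alerted, material in self._outcomes if material]
        return sum(positives) / len(positives) if positives else None

    def breaches(self):
        """Return the names of any validation limits currently breached."""
        breached = []
        fpr, rec = self.false_positive_rate(), self.recall()
        if fpr is not None and fpr > self.max_fp_rate:
            breached.append("false_positive_rate")
        if rec is not None and rec < self.min_recall:
            breached.append("recall")
        return breached
```

Publishing the output of `breaches()` to the same dashboard as the risk alerts treats model health as one more monitored signal.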

Regulatory context and compliance integration

Since compliance is a pillar of financial services, let's examine how real-time monitoring streamlines both regulatory demands and internal governance.

Supervisory frameworks increasingly expect institutions to demonstrate not just detection but documented, time-stamped response. Compliance-oriented real-time risk monitoring is typically coupled with automated evidence collection, control validation, and SLA-based workflow routing so that material alerts translate directly into traceable compliance actions, not just email notifications. This architecture supports examiners who want to see that the institution knew about a control weakness and acted within a defined timeframe.

At the broader regulatory level, Basel III monitoring uses dashboards and semiannual monitoring frameworks drawing on national supervisor data to analyze risk-based capital, leverage, and liquidity metrics, which means even macro-prudential regulators expect recurring, data-driven visibility rather than purely annual snapshots. For community banks and credit unions, the practical implication is that internal risk dashboards should be able to produce current-state capital adequacy and liquidity coverage ratio data on demand, not only at quarter-end.

Key compliance workflow patterns enabled by real-time monitoring include:

- Automated generation of Suspicious Activity Report pre-population data from streaming AML alerts

- SLA timers that count from the moment of alert generation to documented resolution, providing a full audit chain

- Control testing that runs continuously rather than on a quarterly sample schedule, surfacing control failures in near-real-time

- Regulatory reporting feeds that draw from the same streaming data store used for operational monitoring, reducing reconciliation errors between internal and external reports
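The SLA timer and audit chain pattern can be sketched as a small record type. In this Python illustration, `ComplianceCase`, its field names, and the action strings are hypothetical. The point is only that the clock starts at alert generation and that every step is appended with a timestamp and an actor.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class ComplianceCase:
    alert_id: str
    raised_at: datetime
    sla: timedelta
    audit_trail: list = field(default_factory=list)
    resolved_at: Optional[datetime] = None

    def __post_init__(self):
        # The SLA clock starts at alert generation, not at first human touch.
        self.audit_trail.append((self.raised_at, "alert_generated", "system"))

    def log(self, at, action, actor):
        # Append-only: entries are added, never edited.
        self.audit_trail.append((at, action, actor))

    def resolve(self, at, actor, note):
        self.resolved_at = at
        self.log(at, f"resolved: {note}", actor)

    def remaining(self, now):
        """Time left on the SLA; negative once the deadline has passed."""
        end = self.resolved_at or now
        return self.raised_at + self.sla - end

    def breached(self, now):
        return self.remaining(now) < timedelta(0)
```

Because resolution freezes the clock, `remaining` reports the same margin whenever an examiner later asks how quickly the case was closed.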

For a deeper view of how these workflows map to specific supervisory expectations, review resources on compliance monitoring best practices and automating risk assessment. Institutions navigating complex environments should also consult materials on regulatory compliance risks and compliance tools to match tool capabilities to exam-ready requirements.

AI-driven early warning and predictive monitoring

As technology advances, AI and predictive analytics are reshaping what proactive risk monitoring can achieve at both the portfolio and systemic levels.

Traditional real-time monitoring tells you what is happening now. AI-driven early warning tells you what is likely to happen in the next 30 to 90 days, giving risk teams lead time to adjust exposure limits, tighten underwriting criteria, or increase loan loss reserves before stress becomes visible in trailing metrics. Empirically grounded predictive models using dynamic surveillance frameworks have been shown to outperform traditional benchmarks and generate lead times over established risk proxies such as the VIX volatility index, which means AI-constructed risk indexes can begin signaling deterioration before conventional market-based indicators respond.

The practical capabilities AI adds to a real-time monitoring program include:

- Dynamic exposure recalibration: Models that update credit risk scores and concentration limits continuously as new payment, behavioral, and macroeconomic data streams in, rather than recalibrating only at monthly model runs.

- Pattern recognition across portfolios: Neural networks that identify correlated delinquency patterns across geographic segments or loan types before those correlations become statistically obvious in aggregate data.

- Scenario bridging: AI models that translate a detected early warning signal into a simulated portfolio stress scenario, giving the CRE Loan Risk Predictor context for how a shift in commercial real estate valuations might propagate through the book.

- Forecast modeling for capital planning: Predictive models that project delinquency trajectories 60 to 90 days forward, enabling more precise provisioning and capital buffer decisions.

The lead time advantage is the most significant practical benefit. A risk index that signals elevated systemic risk weeks before the VIX or credit spread indicators respond gives portfolio managers and CROs time to act deliberately, not reactively.
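An institution that wants to check a lead time claim against its own data could start with a lagged correlation. The Python sketch below is one simple way to do that, under the assumption that both series are sampled at the same frequency and have the same length. `estimate_lead` is a hypothetical helper, and a high correlation at some lag is evidence of co-movement, not proof of predictive power.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation of two equal-length numeric sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / sqrt(sxx * syy)


def estimate_lead(signal, benchmark, max_lag):
    """Return (lag, correlation) where signal[t] best matches benchmark[t + lag]."""
    best_lag, best_corr = 0, float("-inf")
    for lag in range(max_lag + 1):
        xs = signal[: len(signal) - lag]
        ys = benchmark[lag:]
        corr = pearson(xs, ys)
        if corr > best_corr:
            best_lag, best_corr = lag, corr
    return best_lag, best_corr
```

If the best lag is three periods on daily data, the internal index moved roughly three days before the benchmark did over the sample tested.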

A practitioner's take: the realities and hidden levers of real-time risk monitoring

With these foundations explained, it's worth stepping back to examine what most teams miss until operational friction emerges and examiner feedback arrives.

The most common error in real-time risk monitoring programs is confusing infrastructure speed with program effectiveness. Institutions invest in low-latency streaming pipelines and announce a "real-time risk capability," then discover within the first quarter that the alerts are too numerous to act on, the models powering them have not been validated for the current rate environment, and there is no defined workflow specifying who does what within what timeframe when a material alert fires. Speed without governance is simply faster noise.

Real-time risk monitoring functions as an end-to-end loop: data ingestion, risk signal computation through streaming and stateful processing, alerting and dashboards, governance through model validation and explainability, workflow routing, and continuous learning and tuning. Breaking any link in that loop degrades the entire program, and the links that most frequently break are model governance and workflow closure, not the streaming infrastructure itself.

The other nuance that experienced practitioners emphasize is that "real-time" can mean very different latencies depending on the risk type and the regulatory framework, from sub-second streaming to near-real-time micro-batch to regulatory-period monitoring. Decision-makers who conflate these in vendor conversations often end up purchasing sub-second infrastructure for use cases where near-real-time would suffice, while neglecting the event-time semantics and model explainability requirements that actually determine whether the program satisfies examiners.

The hidden levers that distinguish high-performing real-time risk programs from underperforming ones are event-time modeling precision, continuous model validation cadence, materiality scoring calibration, and a clearly defined operational closure metric. That last point is critical: measure your program by how quickly a material alert moves from detection to documented resolution, not by how many alerts the system generates per hour. For deeper context on this, reviewing advanced risk assessment methods helps connect technical monitoring architecture to governance and credit decision frameworks.

Pro Tip: Define "operational closure" as a key performance indicator before you go live. Track the time from alert generation to documented risk decision, and use that metric to identify governance bottlenecks rather than infrastructure bottlenecks. Most programs have the latter under control and the former unmanaged.
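As a sketch of how that KPI might be computed, the Python function below takes a list of material alerts with their generation and resolution timestamps and reports the median time to closure, the share closed within SLA, and the count still open. The function name, the tuple layout, and the returned keys are illustrative assumptions.

```python
from datetime import datetime, timedelta
from statistics import median


def closure_metrics(alerts, sla):
    """alerts: list of (raised_at, resolved_at or None) for material alerts."""
    durations = [resolved - raised
                 for raised, resolved in alerts if resolved is not None]
    unresolved = sum(1 for _, resolved in alerts if resolved is None)
    within_sla = sum(1 for d in durations if d <= sla)
    return {
        "median_time_to_closure": median(durations) if durations else None,
        "share_closed_within_sla": within_sla / len(durations) if durations else None,
        "unresolved": unresolved,
    }
```

Reporting the unresolved count alongside the median matters, because a fast median can hide a backlog of alerts that never reach a documented decision.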

How RiskInMind can elevate your real-time risk monitoring

The principles outlined in this article are not theoretical. They map directly to the capabilities risk management teams need to operationalize at scale, and that is precisely what we have built at RiskInMind.

Our AI-powered risk management platform delivers sub-half-second processing, SOC 2 certified security, and a suite of specialized AI agents coordinated by Ava, our central AI director, covering regulatory compliance, credit risk, and market analysis simultaneously. The AI Loan Assessor automates credit risk evaluation with real-time data ingestion, while the Bank Statement Analyzer surfaces behavioral risk signals from financial data without manual review cycles. For commercial real estate portfolios, the CRE Loan Predictor provides predictive early warning for concentration and valuation risk. Explore a demo or connect with our team to see how these tools map to your institution's specific monitoring and compliance workflow requirements.

Frequently asked questions

How does real-time risk monitoring differ from traditional risk reporting?

Real-time risk monitoring processes and analyzes data continuously, enabling instant alerts and compliance actions, while traditional risk reporting relies on batch processing and delayed intervention. A streaming-first architecture enables real-time alerting and workflow routing that batch systems cannot match.

What are the most common pitfalls in deploying real-time risk monitoring?

Frequent challenges include high volumes of false positives, network and data latency, ineffective alert deduplication, and poorly defined event-time handling. Edge cases including false positives and unreliable event ordering are consistent pain points that require deliberate architectural and governance responses.

Does real-time monitoring satisfy all regulatory requirements?

It supports compliance automation and SLA-driven incident handling but must be aligned with specific supervisory expectations and evidence-collection mandates. Compliance-oriented real-time monitoring routes material alerts to SLA-based workflows, but the institution must define those workflows to satisfy examiner standards.

Can AI really provide early warning signals in real-time risk monitoring?

Yes, AI-driven models and predictive analytics can detect emerging risks ahead of traditional benchmarks, granting decision-makers vital lead time. Empirically grounded AI models can generate predictive risk warnings with lead time over standard market indices such as the VIX, enabling proactive portfolio management rather than reactive response.