Traditional risk management methods at banks, credit unions, and community lenders were built for a slower, more predictable world. Today, fraud schemes evolve faster than manual review cycles, loan decisions carry more regulatory weight than ever, and portfolio monitoring demands continuous attention that spreadsheets and rule-based systems simply cannot sustain. AI is changing that equation rapidly, delivering measurably better outcomes in fraud detection, credit assessment, and compliance monitoring. This guide lays out where AI produces the clearest gains, how to build the right foundation for it, and what oversight practices separate institutions that succeed with AI from those that struggle.

Table of Contents

- Understanding AI's impact on risk management

- Preparing your data and tools for AI integration

- Step-by-step: Building an AI-driven risk management workflow

- Verifying and monitoring AI for risk compliance and operational losses

- The uncomfortable truth about AI in risk management

- Ready to elevate your risk management with AI?

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| AI boosts accuracy | Machine learning models deliver dramatic improvements in fraud detection and credit risk accuracy. |
| Preparation is critical | Strong data governance and the right tools are prerequisites for safe AI adoption in finance. |
| Transparency protects you | Explainable AI solutions and ongoing performance monitoring help avoid compliance penalties and hidden losses. |
| Continuous oversight needed | Operational losses can increase if AI models run without robust risk controls and regular human review. |

Understanding AI's impact on risk management

Now that you know what's at stake, let's see where AI makes the biggest difference in risk management.

The results AI produces in core risk functions are not marginal improvements. They represent a shift in accuracy, speed, and scalability that legacy models cannot match. Results reported for AI risk management strategies at forward-looking institutions show this clearly: credit scoring powered by machine learning cut the default rate from 12% to 6%, raised the approval rate from 65% to 78%, and reduced processing time from 45 minutes to 12 minutes. For a community bank or credit union processing hundreds of applications each week, that combination of accuracy and speed is operationally significant.

Fraud detection and anti-money laundering are equally compelling. Manual review teams depend on fixed rules and after-the-fact reporting, which gives fraud actors time to complete transactions and move funds. AI models, by contrast, analyze patterns across thousands of variables in real time. Advanced gradient-boosted classifiers like XGBoost achieve 98.2% accuracy with a 93.3% F1-score in fraud detection tasks, vastly outperforming traditional logistic regression approaches that financial institutions have relied on for decades.
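Accuracy and F1-score answer different questions, which is why the two figures above are reported together. The sketch below shows how each is derived from a confusion matrix. The counts are invented for illustration (they are not the data behind the 98.2% and 93.3% figures), and the class and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    """Counts from comparing model flags against confirmed outcomes."""
    true_positive: int   # fraud correctly flagged
    false_positive: int  # legitimate transactions flagged as fraud
    false_negative: int  # fraud the model missed
    true_negative: int   # legitimate transactions correctly passed


def accuracy(counts: ConfusionCounts) -> float:
    """Share of all transactions that were classified correctly."""
    total = (counts.true_positive + counts.false_positive
             + counts.false_negative + counts.true_negative)
    return (counts.true_positive + counts.true_negative) / total


def f1_score(counts: ConfusionCounts) -> float:
    """Harmonic mean of precision and recall, written in count form."""
    denominator = (2 * counts.true_positive
                   + counts.false_positive + counts.false_negative)
    if denominator == 0:
        return 0.0
    return 2 * counts.true_positive / denominator


# Hypothetical month: 10,000 transactions, 100 of them fraudulent.
sample = ConfusionCounts(true_positive=80, false_positive=10,
                         false_negative=20, true_negative=9890)
# A model that never flags anything, scored on a similar hypothetical month.
never_flags = ConfusionCounts(true_positive=0, false_positive=0,
                              false_negative=100, true_negative=9900)

for label, counts in (("sample", sample), ("never_flags", never_flags)):
    print(f"{label}: accuracy={accuracy(counts):.4f} f1={f1_score(counts):.4f}")
```

Because fraud is rare, a model that flags nothing still scores high on accuracy while its F1-score is zero. That is the reason to track F1 (or precision and recall separately) alongside accuracy when benchmarking fraud models.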

The benefits also extend to operational risk and compliance monitoring. AI systems can parse large regulatory documents in seconds using natural language processing, flag emerging compliance gaps, and generate structured reports that would take a compliance team hours to produce. For institutions exploring risk management best practices that go beyond checkbox compliance, AI represents a genuine shift in how risk culture is operationalized.

Key areas where AI outperforms traditional methods:

- Credit underwriting: Faster, more consistent decisions with demonstrably lower default rates.

- Fraud and AML detection: Real-time anomaly flagging with accuracy rates exceeding 98%.

- Regulatory text analysis: NLP models extract obligations and changes from complex regulatory documents automatically.

- Portfolio monitoring: Continuous surveillance of loan book health without manual batch processing.

- Operational risk scoring: AI models identify system vulnerabilities and behavioral signals that precede operational failures.

Forex trading risk strategies illustrate the same point: high-velocity, data-rich environments reward AI adoption the most, a lesson that applies directly to institutional lending and portfolio risk.

Pro Tip: Before benchmarking AI against your current systems, document baseline performance metrics for fraud catch rate, underwriting accuracy, and processing time. This creates a factual foundation for measuring AI's actual impact at your institution.
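One way to put that baseline to work is to compute the relative change for each metric once AI results are available. The sketch below is a minimal illustration that uses the credit scoring figures quoted earlier in this section as sample inputs; the metric names and the `relative_change` helper are hypothetical, and your own baseline numbers would replace the values shown.

```python
def relative_change(baseline: float, current: float) -> float:
    """Return the change from baseline, expressed as a fraction of baseline."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (current - baseline) / baseline


# Sample inputs taken from the credit scoring figures quoted in this guide.
baseline = {
    "default_rate_pct": 12.0,
    "approval_rate_pct": 65.0,
    "processing_minutes": 45.0,
}
with_ai = {
    "default_rate_pct": 6.0,
    "approval_rate_pct": 78.0,
    "processing_minutes": 12.0,
}

changes = {
    name: relative_change(baseline[name], with_ai[name]) for name in baseline
}

for name, change in changes.items():
    print(f"{name}: {baseline[name]} -> {with_ai[name]} ({change:+.1%})")
```

Reporting both the absolute figures and the relative change avoids a common confusion: a 13-point rise in approval rate (65% to 78%) is a 20% relative increase, and the two numbers should not be used interchangeably.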

Preparing your data and tools for AI integration

With the major benefits in mind, let's cover the building blocks you need before launching your AI risk program.

Effective AI begins with data quality, not with model selection. A sophisticated machine learning model trained on incomplete, inconsistent, or biased data will produce unreliable predictions and expose your institution to both credit losses and regulatory criticism. Before selecting any AI platform or model, your team should audit existing data pipelines for completeness, accuracy, and historical depth. Loan origination data, payment history, behavioral signals, and macroeconomic variables all feed into robust credit and fraud models. Gaps in any of these layers degrade model performance in ways that are often invisible until losses materialize.
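A completeness audit can start very simply. The sketch below assumes records are available as Python dictionaries and reports, for each required field, the share of records with a usable value. The field names, the sample records, and the 95% threshold are all hypothetical placeholders, not recommendations.

```python
from collections import Counter

# Hypothetical required fields for a credit model input table.
REQUIRED_FIELDS = ("loan_id", "origination_date", "payment_history", "income")


def completeness_report(records, required_fields):
    """Return the share of records with a usable value for each field."""
    if not records:
        raise ValueError("records must not be empty")
    missing = Counter()
    for record in records:
        for field in required_fields:
            value = record.get(field)
            # Treat absent keys, None, and blank strings as missing.
            if value is None or (isinstance(value, str) and not value.strip()):
                missing[field] += 1
    total = len(records)
    return {field: 1 - missing[field] / total for field in required_fields}


# Invented sample records for illustration only.
sample_records = [
    {"loan_id": "A1", "origination_date": "2021-03-01",
     "payment_history": "on_time", "income": 52000},
    {"loan_id": "A2", "origination_date": "2021-04-12",
     "payment_history": "", "income": 61000},
    {"loan_id": "A3", "origination_date": "2021-05-20",
     "payment_history": None, "income": None},
    {"loan_id": "A4", "origination_date": "2021-06-02",
     "payment_history": "late_30", "income": 47000},
]

report = completeness_report(sample_records, REQUIRED_FIELDS)
gaps = [field for field, share in report.items() if share < 0.95]
print(report)
print("fields below threshold:", gaps)
```

A real audit would also check accuracy and historical depth, which need reference data and date ranges that this sketch does not model.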

Governance is equally non-negotiable. Under regulatory frameworks like the EU AI Act, AI systems built as pipelines require their data flows to be traced for high-risk classification, with data governance standards applied to upstream components throughout the pipeline. Even if your institution operates primarily under U.S. regulatory frameworks, the principles translate directly. Any AI system making consequential decisions about credit, fraud, or compliance must be auditable, and that auditability starts at the data layer.

The following comparison illustrates how preparation investments affect downstream AI performance:

| Preparation factor | Underprepared institutions | Well-prepared institutions |
|---|---|---|
| Data completeness | Partial records, inconsistent fields | Standardized, validated datasets |
| Governance documentation | Informal or absent | Formal lineage and audit trails |
| Model explainability | Black-box outputs | SHAP/LIME integrated from deployment |
| Regulatory readiness | Reactive post-audit | Proactive, continuously documented |
| Retraining protocols | Ad hoc or infrequent | Scheduled, version-controlled cycles |

The right tools for this stage include data quality platforms that flag missing values and inconsistencies, model management solutions that handle versioning and rollback, and explainability frameworks such as SHAP (Shapley Additive Explanations) and LIME (Local Interpretable Model-agnostic Explanations). These are not optional add-ons. Regulators and examiners increasingly expect financial institutions to explain how automated decisions were reached, and explainability tools are the mechanism that makes that possible.
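To show what a Shapley-based explanation actually computes, the sketch below calculates exact Shapley attributions for a toy three-feature risk score by averaging each feature's marginal contribution over every feature ordering. This is not the SHAP library and not a production method: exact enumeration is only feasible for a handful of features, and the scoring function, feature names, and applicant values are all invented for illustration.

```python
from itertools import permutations


def shapley_values(predict, instance, baseline):
    """Exact Shapley attributions relative to a baseline input.

    Features start at their baseline values and are switched to the
    instance's values one at a time, in every possible order.
    """
    features = list(instance)
    orderings = list(permutations(features))
    totals = {name: 0.0 for name in features}
    for ordering in orderings:
        current = dict(baseline)
        previous = predict(current)
        for name in ordering:
            current[name] = instance[name]
            score = predict(current)
            totals[name] += score - previous
            previous = score
    return {name: total / len(orderings) for name, total in totals.items()}


def toy_risk_score(applicant):
    """Hypothetical score with one interaction term (higher = riskier)."""
    score = 0.5 * applicant["debt_to_income"] + 0.2 * applicant["late_payments"]
    if applicant["debt_to_income"] > 0.4 and applicant["late_payments"] >= 2:
        score += 0.1  # extra risk when both conditions hold
    score -= 0.05 * applicant["years_at_employer"]
    return score


applicant = {"debt_to_income": 0.5, "late_payments": 3, "years_at_employer": 2}
reference = {"debt_to_income": 0.3, "late_payments": 0, "years_at_employer": 5}

attributions = shapley_values(toy_risk_score, applicant, reference)
for name, value in attributions.items():
    print(f"{name}: {value:+.3f}")
```

The attributions sum to the difference between the applicant's score and the reference score, which is the property that makes this family of methods useful for explaining an individual decision to an examiner. The interaction term's 0.1 is split evenly between the two features that trigger it.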

Guidance on automating risk assessment at financial institutions consistently emphasizes that automation built on poor data infrastructure amplifies existing weaknesses rather than correcting them. A sound step-by-step risk analytics framework builds the data foundation first, then layers in model sophistication.

Pro Tip: Set up audit trails and version controls from day one of your AI integration project. Retrofitting governance documentation after model deployment is significantly more expensive and time-consuming, and carries more regulatory risk, than building it into the initial architecture.

Step-by-step: Building an AI-driven risk management workflow

Once your foundations are ready, you can start building your automated risk processes.

The workflow that produces reliable AI risk outcomes follows a consistent structure, regardless of whether you are targeting fraud detection, credit underwriting, or compliance monitoring. Skipping steps or compressing timelines is where most implementations run into trouble.

- Map your risk priorities. Identify which risk categories (fraud, loan underwriting, portfolio delinquency monitoring, or regulatory compliance) offer the highest return on AI investment given your institution's scale and exposure profile. Not every use case is equally ready for automation on day one.

- Source and structure your data. Pull historical data from core banking systems, loan origination platforms, and third-party credit bureaus. Clean, normalize, and validate it before any model touches it. Document data lineage at this stage.

- Select your model architecture. Gradient-boosted models like XGBoost perform well on the structured tabular data common in credit and fraud applications. Neural networks add value for high-dimensional behavioral and transaction data. Choose based on interpretability requirements as well as accuracy targets.

- Train and validate with fairness in mind. Split your dataset appropriately, train on historical outcomes, and test rigorously for both predictive accuracy and demographic fairness. Regulatory agencies expect models to demonstrate equitable treatment across protected classes.

- Apply explainability frameworks before deployment. Integrate SHAP or LIME outputs as part of the model's standard decision output, not as a post-hoc diagnostic tool. This directly supports compliance because, as research confirms, AI enhances RegTech monitoring but requires explainability frameworks and fairness metrics to avoid fines and examination findings.

- Deploy with monitoring infrastructure in place. Set up dashboards that track prediction accuracy, data drift, and flag volume in real time. Define threshold alerts that trigger human review when model confidence drops below acceptable levels.

- Document every model change. Retraining events, threshold adjustments, and feature additions must be logged with version control. Regulators treat undocumented model changes as governance failures.
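The fairness testing in the "train and validate" step can begin with a simple comparison of approval rates across groups. The sketch below computes per-group approval rates and the ratio of the lowest rate to the highest. The group labels and decisions are invented, and the 0.8 review threshold is a commonly cited rule of thumb used here purely as an assumption, not a regulatory standard for lending.

```python
from collections import defaultdict


def approval_rates(decisions):
    """Compute the approval rate per group.

    `decisions` is an iterable of (group_label, approved) pairs.
    """
    approved = defaultdict(int)
    total = defaultdict(int)
    for group, was_approved in decisions:
        total[group] += 1
        if was_approved:
            approved[group] += 1
    return {group: approved[group] / total[group] for group in total}


def disparate_impact_ratio(rates):
    """Ratio of the lowest group approval rate to the highest."""
    highest = max(rates.values())
    if highest == 0:
        raise ValueError("no group has any approvals")
    return min(rates.values()) / highest


# Invented validation-set decisions: 10 applicants per group.
decisions = (
    [("group_a", True)] * 8 + [("group_a", False)] * 2
    + [("group_b", True)] * 6 + [("group_b", False)] * 4
)

rates = approval_rates(decisions)
ratio = disparate_impact_ratio(rates)
REVIEW_THRESHOLD = 0.8  # assumption for illustration only
needs_review = ratio < REVIEW_THRESHOLD

print(rates)
print(f"ratio={ratio:.2f} needs_review={needs_review}")
```

A single ratio is a screening signal rather than a verdict. A flagged result is a reason to examine the model's inputs for proxy variables, which is where the explainability outputs from the following step become useful.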

"Accurate credit models reduce default rates to 6% and cut decision time to 12 minutes, but only when fairness metrics and explainability are embedded from the start, not bolted on after examination pressure." Research data confirms these documented credit scoring outcomes are achievable for institutions that follow structured deployment practices.

Connecting this workflow to your existing compliance obligations requires ongoing attention. The machine learning credit assessment approaches that produce the strongest results are built on modern credit risk modeling frameworks that treat explainability and fairness as design requirements, not afterthoughts. Regulatory expectations around financial compliance continue to tighten globally, which makes structured workflow design a strategic necessity.

Pro Tip: Use SHAP values not just for examiner-facing documentation but for internal model tuning. SHAP outputs often reveal that a model is placing unexpected weight on proxy variables that could introduce regulatory or fairness risk.

Verifying and monitoring AI for risk compliance and operational losses

Deploying AI models is not the end. Regular oversight is crucial for keeping risk in check.

One of the most counterintuitive findings in applied AI risk management is that deploying AI without robust monitoring frameworks can actually increase operational losses. Research published by the Federal Reserve Bank of Boston demonstrates that AI investments increase operational losses in banks, particularly from external fraud, client issues, and system failures, when not paired with strong risk management governance. The implication is direct: the technology does not manage itself, and overconfidence in model outputs creates blind spots that bad actors and system failures exploit.

"The institutions that sustain strong AI risk outcomes are those that treat model monitoring as an ongoing operational discipline, not a post-deployment afterthought."

Continuous validation routines should track several distinct dimensions of model health. Concept drift, where the relationship between input variables and outcomes shifts over time due to economic or behavioral changes, is one of the most common causes of model degradation. Accuracy drift, where the model's predictive performance simply declines as it ages, is equally important to measure. Anomaly detection at the monitoring layer, watching for sudden spikes in model rejection rates, unusual approval patterns, or unexpected fraud flag volumes, provides early warning of both external manipulation and internal system failures.
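Accuracy drift can be tracked by scoring the model on consecutive batches of decisions once their true outcomes are known. The sketch below is a minimal illustration under invented data: the window size, baseline accuracy, and tolerance are hypothetical values, and a real routine would work from time-stamped records rather than plain lists.

```python
def windowed_accuracy(predictions, outcomes, window):
    """Accuracy for each consecutive, non-overlapping window of decisions."""
    if len(predictions) != len(outcomes):
        raise ValueError("predictions and outcomes must be the same length")
    if window <= 0:
        raise ValueError("window must be positive")
    scores = []
    # Any trailing partial window is ignored.
    for start in range(0, len(predictions) - window + 1, window):
        pairs = zip(predictions[start:start + window],
                    outcomes[start:start + window])
        correct = sum(1 for predicted, actual in pairs if predicted == actual)
        scores.append(correct / window)
    return scores


def degraded_windows(scores, baseline, tolerance):
    """Indexes of windows whose accuracy fell below baseline - tolerance."""
    return [index for index, score in enumerate(scores)
            if score < baseline - tolerance]


# Invented labels: 1 = flagged/defaulted, 0 = not. Oldest decisions first.
predictions = [1, 0, 1, 0,  1, 1, 0, 0,  1, 0, 0, 1]
outcomes = [1, 0, 1, 0,  1, 0, 0, 0,  0, 1, 0, 0]

scores = windowed_accuracy(predictions, outcomes, window=4)
alerts = degraded_windows(scores, baseline=0.9, tolerance=0.1)

print("accuracy by window:", scores)
print("windows needing review:", alerts)
```

A steady decline across windows, as in this invented series, is the pattern that should prompt a retraining review. It does not by itself distinguish concept drift from a data pipeline fault, so the investigation still needs a human owner.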

Practical monitoring elements your institution should maintain:

- Real-time dashboards that surface fraud flag volumes, approval rate shifts, and model confidence scores.

- Automated drift alerts that trigger human review when feature distributions shift beyond defined thresholds.

- Audit log integration that connects model decisions back to individual loan files or transaction records for examiner access.

- Scheduled backtesting against recent outcome data to confirm that model accuracy remains within acceptable bounds.

- Edge case simulation that stress-tests the model against unusual but plausible scenarios, such as rapid credit deterioration or coordinated fraud patterns.
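For the automated drift alerts in the list above, one widely used measure of feature distribution shift is the Population Stability Index (PSI), which compares how a feature's values fall into bins at training time and in recent data. The sketch below is a minimal version: the bin counts are invented, and the 0.2 alert threshold is an assumption for illustration rather than a standard.

```python
import math


def population_stability_index(expected_counts, actual_counts, floor=1e-6):
    """PSI between two binned distributions of the same feature.

    Both inputs are counts per bin, using identical bin edges. Shares are
    floored at a small value so that empty bins do not cause log(0).
    """
    if len(expected_counts) != len(actual_counts):
        raise ValueError("both distributions must use the same bins")
    expected_total = sum(expected_counts)
    actual_total = sum(actual_counts)
    psi = 0.0
    for expected, actual in zip(expected_counts, actual_counts):
        expected_share = max(expected / expected_total, floor)
        actual_share = max(actual / actual_total, floor)
        psi += (actual_share - expected_share) * math.log(
            actual_share / expected_share)
    return psi


# Invented bin counts for one feature, e.g. transaction amount quartiles.
training_bins = [250, 250, 250, 250]
recent_bins = [100, 200, 300, 400]

psi_unchanged = population_stability_index(training_bins, training_bins)
psi_shifted = population_stability_index(training_bins, recent_bins)
ALERT_THRESHOLD = 0.2  # assumption for illustration only
trigger_review = psi_shifted > ALERT_THRESHOLD

print(f"unchanged={psi_unchanged:.3f} shifted={psi_shifted:.3f} "
      f"trigger_review={trigger_review}")
```

PSI measures a shift in inputs, not a loss of accuracy, so it works best as an early signal that runs alongside scheduled backtesting rather than replacing it.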

Real-world institutional failures make clear that gaps in AI monitoring and operational risk controls are not theoretical risks. The same discipline applies to fraud: robust AI-based fraud controls require continuous calibration, not one-time configuration.

Pro Tip: Simulate edge cases at least quarterly and document all model changes with timestamps, rationale, and approval authority. During regulatory examinations, this paper trail is often the difference between a finding and a satisfactory review.
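A change record that captures those three elements can be very small. The sketch below is one possible shape for such a log entry, assuming an in-memory list as the log; the field names, the change types, and the sample values are hypothetical, and a real deployment would write to durable, access-controlled storage.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ModelChange:
    """One immutable entry in a model change log."""
    model_name: str
    version: str
    change_type: str  # e.g. "retraining", "threshold_adjustment"
    rationale: str
    approved_by: str
    timestamp: str    # ISO 8601, UTC


def record_change(log, model_name, version, change_type,
                  rationale, approved_by, now=None):
    """Validate and append a change entry, then return it."""
    for label, value in (("rationale", rationale),
                         ("approved_by", approved_by)):
        if not value.strip():
            raise ValueError(f"{label} is required for every model change")
    moment = now or datetime.now(timezone.utc)
    entry = ModelChange(model_name, version, change_type,
                        rationale, approved_by, moment.isoformat())
    log.append(entry)
    return entry


change_log = []
record_change(
    change_log,
    model_name="fraud_classifier",
    version="1.3.0",
    change_type="threshold_adjustment",
    rationale="Raised review threshold after quarterly edge case simulation",
    approved_by="Model Risk Committee",
)
print(change_log[0])
```

Rejecting entries that lack a rationale or an approver at write time is the design choice that matters here: it keeps the paper trail complete without relying on someone to backfill it before an examination.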

The uncomfortable truth about AI in risk management

Most AI risk management playbooks focus on model selection, accuracy benchmarks, and integration timelines. They rarely address the organizational reality that determines whether any of it actually works.

The institutions that fail with AI risk programs almost never fail because of the technology. They fail because they deploy sophisticated models on top of weak risk cultures, expect automated outputs to substitute for informed judgment, and do not build the cross-functional accountability structures that keep AI outputs honest. A model that catches 98% of fraud is still a serious problem if no one owns the 2% it misses or questions why flag volumes are climbing quarter over quarter.

Explainability and transparency matter for reasons beyond regulatory compliance. When risk officers, loan officers, and credit committee members can see why a model made a particular recommendation, they engage with it critically rather than deferring to it automatically. That critical engagement is what catches the edge cases that training data never anticipated. It is also what builds the institutional confidence in AI outputs that leads to sustainable adoption rather than periodic backlash from teams who feel replaced rather than supported.

High-performing institutions treat AI as a capability multiplier within a strong governance structure, not a replacement for human judgment at critical decision points. Our view at RiskInMind is that the most durable AI risk programs are those where proven AI risk practices are embedded into performance management, model ownership is assigned explicitly, and cross-functional review cycles are non-negotiable. The technology raises the ceiling on what your risk team can accomplish. The culture and governance structure determine whether you ever reach it.

Ready to elevate your risk management with AI?

The steps in this guide reflect what leading financial institutions are putting into practice right now, and the gap between early adopters and institutions still relying on manual review processes is widening every quarter.

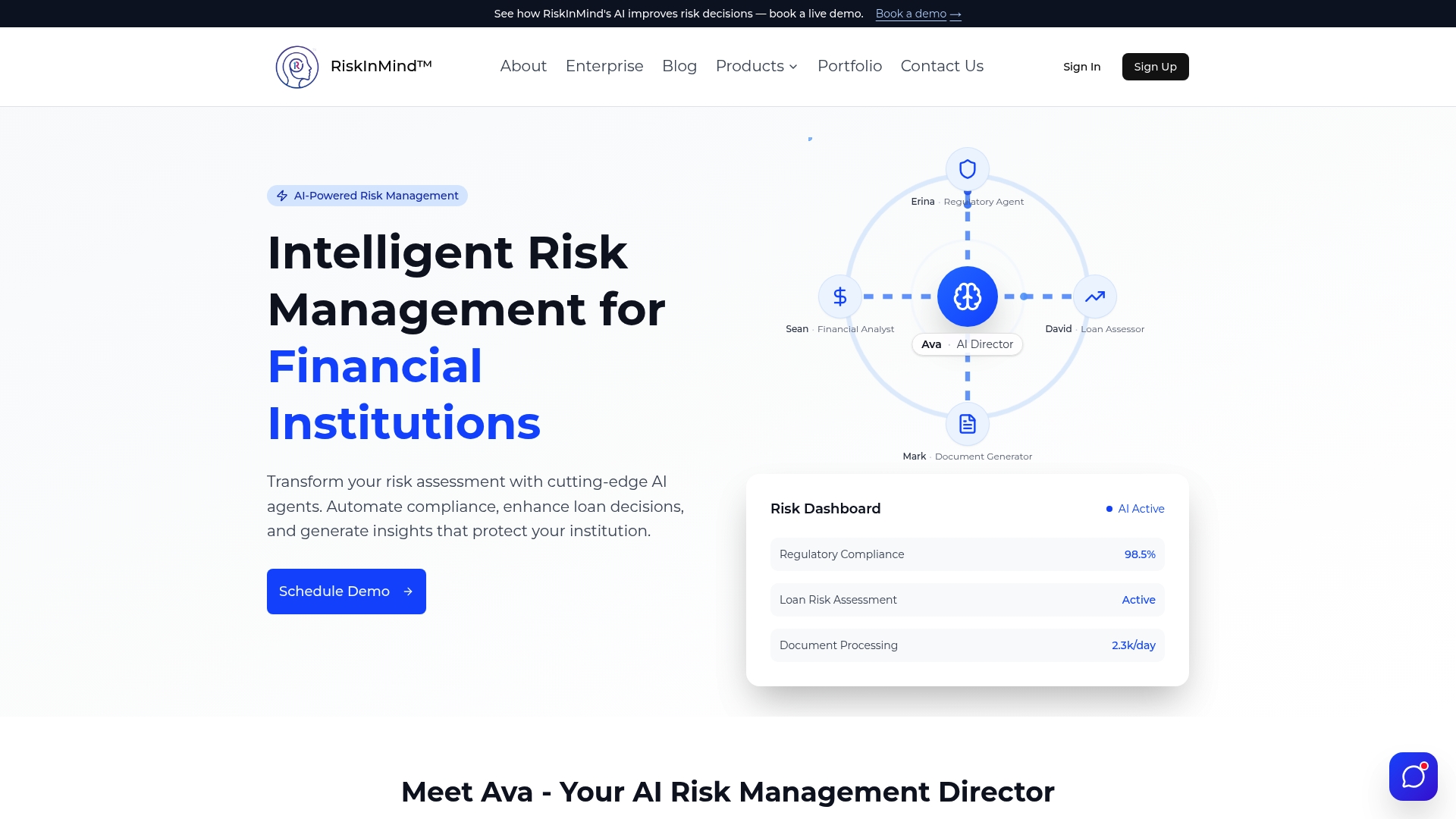

RiskInMind's AI platform is purpose-built for credit unions, community banks, and institutional lenders that need enterprise-grade risk capabilities without the complexity of building from scratch. From the loan underwriting AI that cuts decision time and default rates simultaneously, to the AI regulatory compliance agent that monitors regulatory changes and generates documentation in real time, every tool in the platform is designed for the risk professionals who carry accountability for these outcomes. Explore the full AI risk management platform to see how Ava and her specialized AI agents can integrate with your existing workflows and deliver measurable results.

Frequently asked questions

How does AI improve fraud detection compared to traditional methods?

AI models like XGBoost analyze thousands of behavioral and transaction variables simultaneously, achieving 98.2% detection accuracy with a 93.3% F1-score, far surpassing the fixed-rule, post-event approach of traditional fraud review systems.

What are the main data requirements for effective AI in risk management?

High-quality, complete, and well-governed data is non-negotiable. Regulatory frameworks like the EU AI Act require that pipeline AI systems trace data flows for high-risk classification, meaning data governance must be built into every upstream component of your AI architecture.

Can AI-based risk monitoring increase operational losses?

Yes, and this is frequently underappreciated. Federal Reserve Bank of Boston research finds that AI investments increase operational losses in banks when they are not paired with robust monitoring governance, particularly losses from external fraud and system failures, which automated systems can miss or open new vectors for.

How does explainable AI help with regulatory compliance?

SHAP and LIME frameworks surface the specific variables driving each model decision, which satisfies examiner expectations for model transparency. Research confirms that explainable AI and fairness metrics are required to avoid regulatory fines when using AI for credit and compliance applications.

Which areas in banking benefit most from AI risk management?

Fraud detection, loan underwriting, regulatory compliance monitoring, and portfolio surveillance all produce measurable improvements with AI adoption, though the most dramatic results appear in fraud detection accuracy and credit underwriting speed and precision.